Data Engineering Services

Transform data into actionable insights with seamless integration and automation

Our expertise in data management and data governance transforms complex data into high-quality, actionable insights. We break down business silos and build robust data infrastructures that scale.

Contact Us

Our Data Engineering Services

Customized Data Solutions

Every business has unique data challenges. Our data engineering services are tailored to address your specific needs — from enhancing existing operations to building new data infrastructure from scratch.

Building Robust Data Infrastructure

We configure production-grade cloud and PaaS data environments. Whether you are starting fresh or scaling existing databases, we ensure seamless setup and integration tailored to your data demands.

Versatility Across Cloud Platforms

We work across AWS, Azure, and Google Cloud, leveraging the best platform for your needs. From Amazon Redshift to BigQuery and Azure Synapse, we ensure optimal data processing.

Optimizing Data Processing

We design efficient ETL pipelines that extract, transform, and load data at scale. Our processes handle both structured and unstructured data with rigorous validation.

Data Governance and Management

We implement governance frameworks covering accuracy, consistency, and compliance with international standards. Your data stays accurate, secure, and actionable.

Seamless Data Integration

We integrate your data sources, from ERP to CRM, into a unified view that supports consistent and reliable analytics across departments.

AI-Ready Pipelines & Agent Integrations

We extend the same data engineering foundation to power AI agents: governed datasets, semantic layers, retrieval indexes, and tool APIs that let LangGraph, Vertex AI, or autonomous agents act on your data with a lower risk of hallucination. This work is part of our AI agent development practice.

TESTIMONIALS

Witanalytica has been an awesome team to work with. They have such a talented team with a broad range of expertise in software development, BI and data analysis - which have all been instrumental in helping us achieve our technical goals. We truly value their partnership and look forward to continuing to work together.

Gregg Bansavage

CIO, RBW Logistics

Witanalytica has been an excellent partner in managing and optimizing our Tableau environment. Their team’s technical expertise and proactive support have streamlined our reporting processes, improved dashboard performance, and provided valuable insights to our business. Their responsiveness and deep understanding of data analytics make them a trusted extension of our own team.

Mark Lack

Director of Data Analytics and AI, The Ubique Group

Witanalytica helped us transition from Excel to a dynamic dashboard, allowing us to view all the relevant data and the KPIs that we track as a business. Instead of having our developers code an interface for weeks, we can now instantly accomplish this process through an interface, eliminating the need for manual coding.

Radu Albastroiu

Startup Founder, masinilacheie.ro

Witanalytica’s expertise in big data engineering and visualization complements our digital media audit and customer analytics services. Collaborating with them allows us to deliver end-to-end analytics solutions and services, without the risks and investments associated with building these capabilities in-house.

Silviu Toma

Senior Partner, Microanalytics

Working with Witanalytica has transformed our approach to reporting. Their expertise in PowerBI enabled us to go beyond the limited capabilities of Excel, allowing us to provide our clients with dynamic and visually captivating PowerBI dashboards. This capability has facilitated rapid testing, iteration, and the collection of customer feedback to improve our platform.

Alin Rosca

Startup Founder, RepsMate

Working with Witanalytica has been a consistently positive experience. They are responsive, professional, and approach every revision with patience and precision. What sets them apart is a strong understanding of supply chain management, inventory planning, and sales operations, which makes collaboration efficient and ensures deliverables align with real business needs. They have also worked effectively across multiple departments in our organization and manage a 6-7 hour time zone difference seamlessly. I would confidently recommend them to any organization seeking a skilled and dependable analytics partner.

Rubin Chen

Supply Chain VP, The Ubique Group

Your Goals, Our Expertise

We start from your strategic objectives and work our way back to the right mix of solutions and technologies, not the other way round.

Book a Consulting Call

Our Strategic Approach to Data Engineering

We begin with a thorough understanding of your business needs and BI reporting requirements — identifying key metrics, refresh frequency, and specific reports for decision-making.

We identify data sources and obtain access to underlying systems, collaborating with departments to ensure all relevant data is considered.

We extract data from sources, transform it to fit required formats, and load it into databases, data lakes, and data warehouses — handling structured and unstructured data.

We test for retroactive data updates to ensure integrity, performing upserts or replacements to maintain accurate, up-to-date datasets.

We focus on creating reusable data assets for multiple departments — standardized datasets, dashboards, and BI tools that maximize value.

We provide APIs and web interfaces for easy access to data products through reports, endpoints, files, or emails, ensuring data availability for all stakeholders.

We deliver regular updates, performance optimization, and compliance with governance standards to maintain a high-quality, high-availability data environment.
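The upsert step described above can be sketched roughly as follows. This is a minimal illustration using Python's built-in `sqlite3` module, not a description of any specific client setup; the `orders` table, its columns, and the `upsert_orders` helper are hypothetical names chosen for the example. A second extract corrects one existing row retroactively and adds a new one, without duplicating anything.

```python
import sqlite3


def upsert_orders(conn, rows):
    """Insert new rows; overwrite existing rows that share the same order_id."""
    conn.executemany(
        """
        INSERT INTO orders (order_id, status, amount)
        VALUES (?, ?, ?)
        ON CONFLICT(order_id) DO UPDATE SET
            status = excluded.status,
            amount = excluded.amount
        """,
        rows,
    )
    conn.commit()


# Hypothetical target table keyed on order_id (assumption for this sketch).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id TEXT PRIMARY KEY, status TEXT, amount REAL)"
)

# Initial load.
upsert_orders(conn, [("A-1", "open", 100.0), ("A-2", "open", 50.0)])

# Later extract: A-1 was changed retroactively in the source, A-3 is new.
upsert_orders(conn, [("A-1", "shipped", 95.0), ("A-3", "open", 20.0)])

result = conn.execute(
    "SELECT order_id, status, amount FROM orders ORDER BY order_id"
).fetchall()
print(result)
```

Keying the upsert on a stable business identifier is what makes reloading the same period safe: rerunning an extract updates rows in place instead of appending duplicates.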


When Do You Need Our Data Engineering Services?

- You face complex data integration challenges across multiple systems.

- Your data infrastructure needs building or optimizing for scale.

- You require expertise in handling large volumes of data.

- You want to automate data processing and analytics workflows.

- You struggle with data quality and governance challenges.

- You lack in-house expertise in advanced data technologies.

- You plan to migrate to or leverage cloud-based data solutions.

Why Hire Witanalytica for Data Engineering?

Strategically Aligned

We prioritize your strategic objectives to provide data engineering solutions that yield tangible results and measurable business outcomes.

Efficient Delivery

Our agile data teams expedite transformation of third-party data into structured data marts, delivering first results fast.

Competitive Advantage

Our governance processes and commitment to data quality deliver insights that are accurate, actionable, and set you apart.

Clean, Transparent Data

We prepare data comprehensively, with logs and alerts that ensure data integrity and traceability at every stage.

Cost-Efficient

We prioritize open-source and serverless components, significantly reducing operational costs without compromising quality.

Reusable Data Assets

We create standardized data dictionaries and products designed for reuse across departments, promoting consistency and efficiency.

Our Data Engineering Pricing Models

Transparent pricing built for long-term partnerships, not one-off transactions.

On-Demand Expertise

All tasks are tracked, and the services delivered are invoiced monthly.

| Activity | Hourly Rate |
|---|---|
| AI Agents Development and Implementation | $100 |
| Data Engineering & Database Administration | $110 |
| Business Intelligence Reporting | $90 |
| Data Science | $120 |

Reserved Capacity Agreement

- Pre-purchase a package of monthly working hours that guarantees reserved capacity and priority availability, regardless of our workload.

- Because this capacity is exclusively allocated to you, unused hours do not carry over to the following month.

| Hours Package | Price |
|---|---|
| Every 50 hours | $4,500 (10% savings) |

Alternatively, we offer project-based pricing

For well-defined engagements, we scope the full project upfront and agree on a fixed fee, so you know exactly what to expect.

Technologies

Amazon Web Services

We build data pipelines on Amazon Web Services using services like AWS Lambda for serverless computing, AWS Glue for ETL processes, AWS Lake Formation for data lakes, and Amazon Redshift for data warehousing. These services are known for their scalability, reliability, and integration capabilities.

Microsoft Azure

Our team has hands-on experience with Microsoft Azure data engineering services including Azure Functions for serverless operations, Azure Data Factory for ETL processes, Azure Synapse Analytics, and Databricks for complex analytics and large-scale data processing.

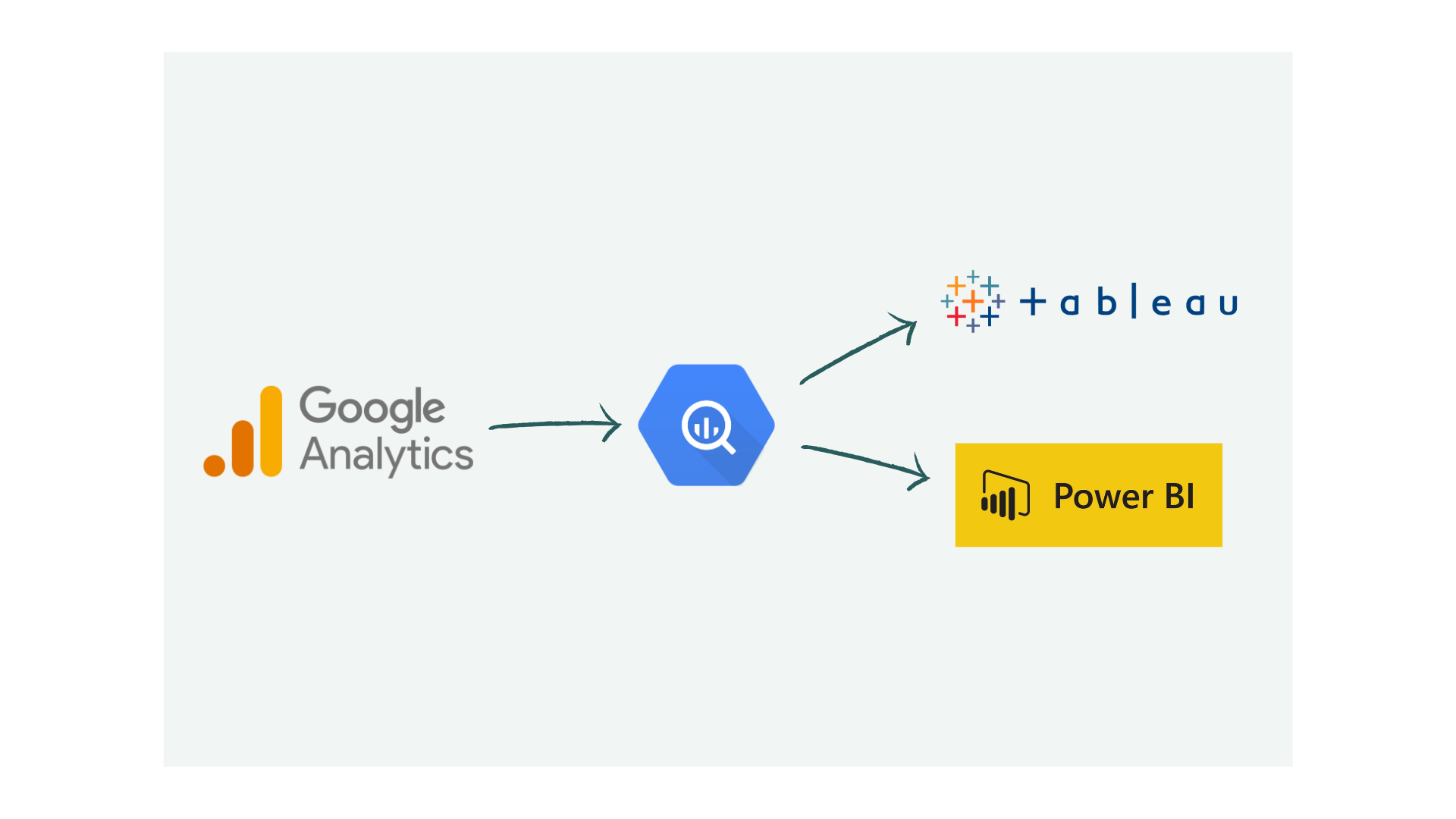

Google Cloud Platform

We design and deploy data engineering solutions on Google Cloud Platform using Cloud Functions for serverless computing, Cloud Dataflow for ETL, and BigQuery for fast data warehousing. GCP integrates well with Google Analytics 4, Looker Studio, and other Google services.

Snowflake

Snowflake is renowned for its innovative data warehousing capabilities, offering seamless scalability and high performance. One of its standout features is the ability to write and execute Python code within its platform using Snowflake Worksheets. This enables data engineers to perform complex data transformations and analytics directly within Snowflake, streamlining workflows and enhancing productivity.

Open Source

Open-source solutions provide flexible and customizable data engineering tools suitable for various business needs. KNIME, alongside the closed-source Alteryx, is popular for its user-friendly ETL capabilities, allowing businesses to build complex workflows without extensive coding. dbt (data build tool) is favored for data transformation and modeling, enabling teams to manage and deploy analytics workflows efficiently.

Case Studies for Data Engineering Services

Explore real-life case studies and see how we delivered measurable outcomes in similar situations.

Showing 13 case studies

3PL Automated Order Entry: From PDF Emails to Deposco via AI

How we built a proof of concept that reads customer order PDFs from email, extracts structured data using AI, and creates orders in Deposco automatically.

Read case study →

Inventory Lot Size Optimization for a Global Industrial Manufacturer

How a global manufacturer freed working capital and recovered warehouse space by optimizing SAP lot sizes using demand variability analysis and a repeatable Alteryx-to-Tableau analytics workflow.

Read case study →

Thanksgiving & Black Friday Sales Analytics: Real-Time Campaign Monitoring

A real-time Tableau dashboard on Salesforce and SQL Server data helped a retailer monitor hourly Black Friday sales and plan next year's strategy.

Read case study →

3PL Digital Transformation: Data Analytics & Automated Invoicing

Discover how a U.S. 3PL company eliminated revenue leakage, automated invoicing, and gained real-time margin visibility.

Read case study →

Affiliate Marketing Dashboards: Unified Performance Tracking

Learn how affiliate teams replaced spreadsheets with unified dashboards to track performance across networks, identify profit leaks, and optimize ROAS.

Read case study →

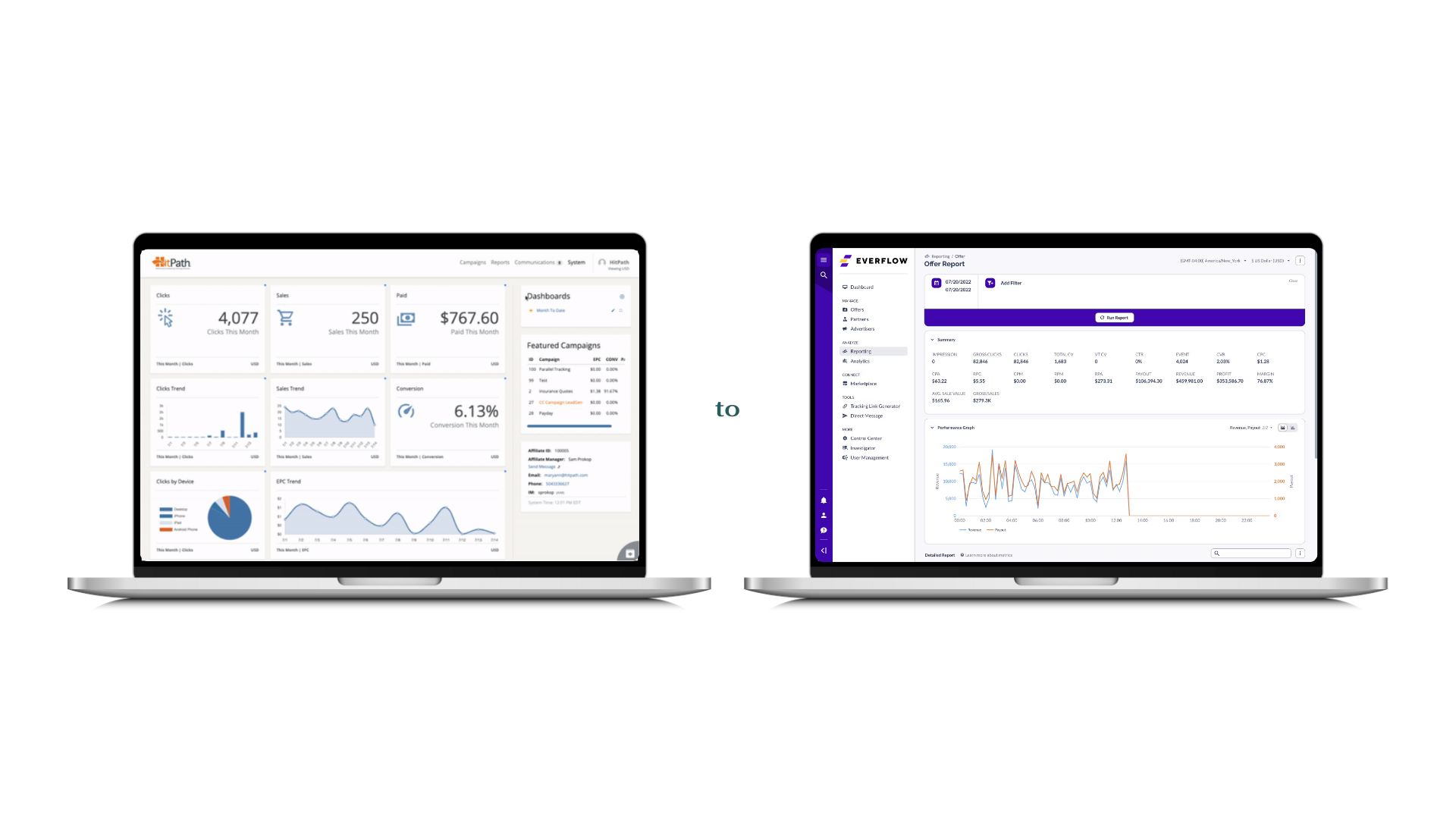

HitPath to Everflow BI Migration for Affiliate Tracking

Unified HitPath and Everflow data into one BI system with migration monitoring dashboards, ensuring reporting continuity throughout the platform transition.

Read case study →

Email Marketing Data Management for Affiliate Publishers

How we helped email marketing publishers manage high-volume campaign data with scalable pipelines, centralized storage, and automated BI reporting.

Read case study →

Multi-Channel Retail Profitability: Amazon vs Wholesale Analytics

A US retailer used Tableau to compare Amazon and wholesale profitability, uncovering margin differences that reshaped their distribution and pricing strategy.

Read case study →

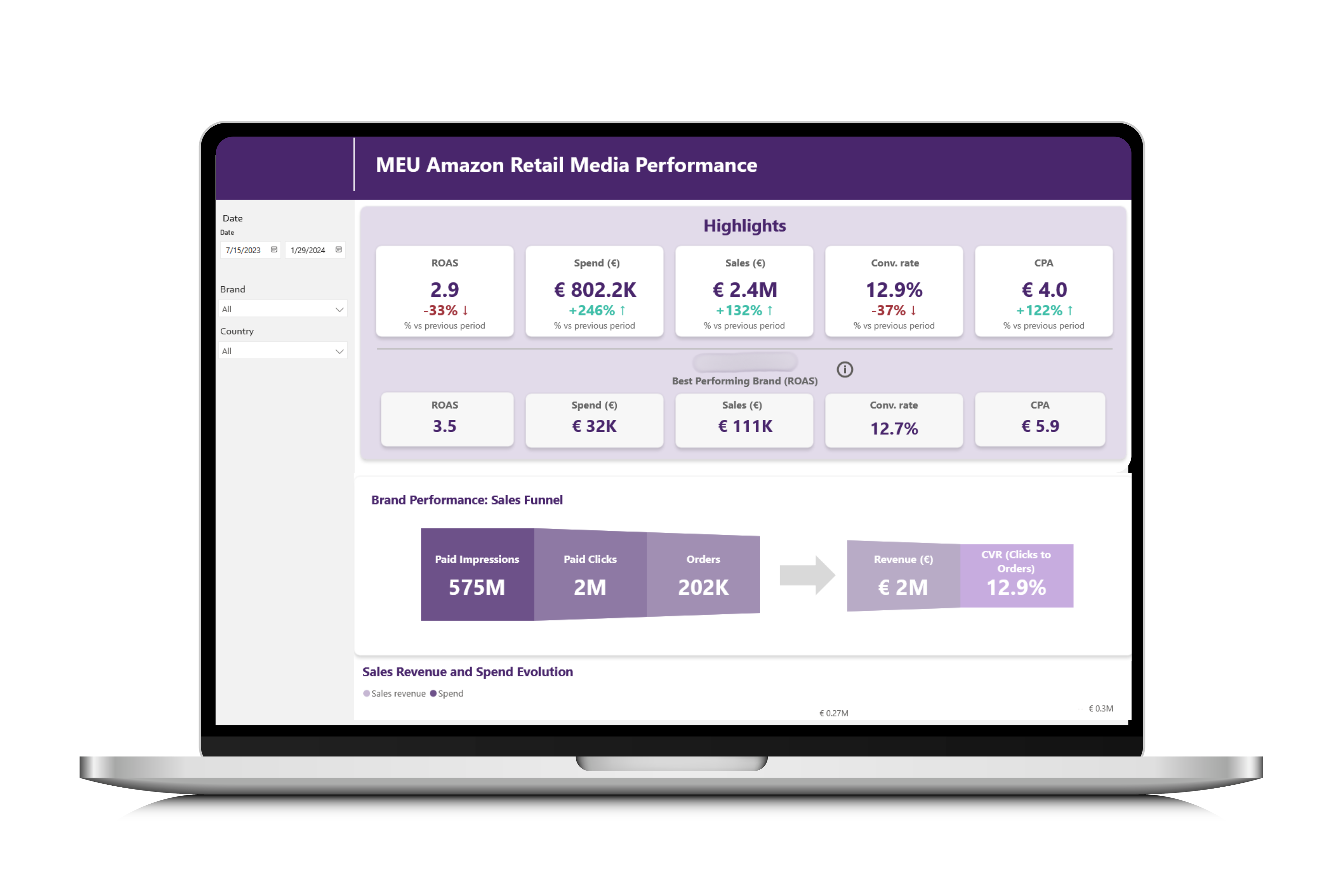

Amazon Ads Reporting with Power BI for Food & Beverage

How a snack manufacturer replaced manual Amazon Ads tracking with automated Power BI dashboards to optimize spend, measure ROAS, and guide marketing decisions.

Read case study →

Healthcare Data Warehouse: Unifying GA4, App Analytics and CRM in BigQuery

How we built a BigQuery data warehouse for a European healthcare clinic, unifying GA4, mobile app analytics, CRM and 10+ paid media platforms to expose onboarding drop-off and enable churn modeling.

Read case study →

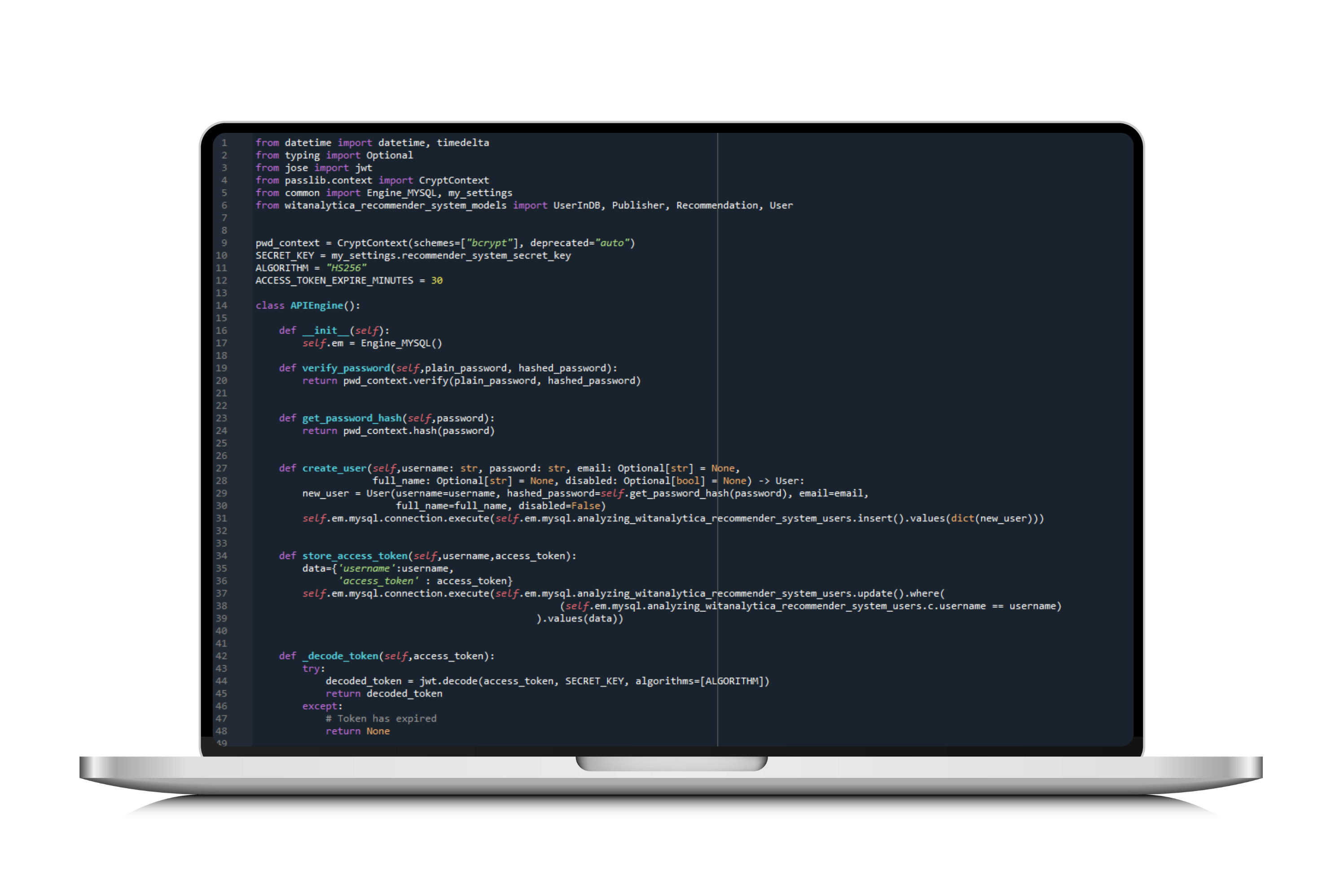

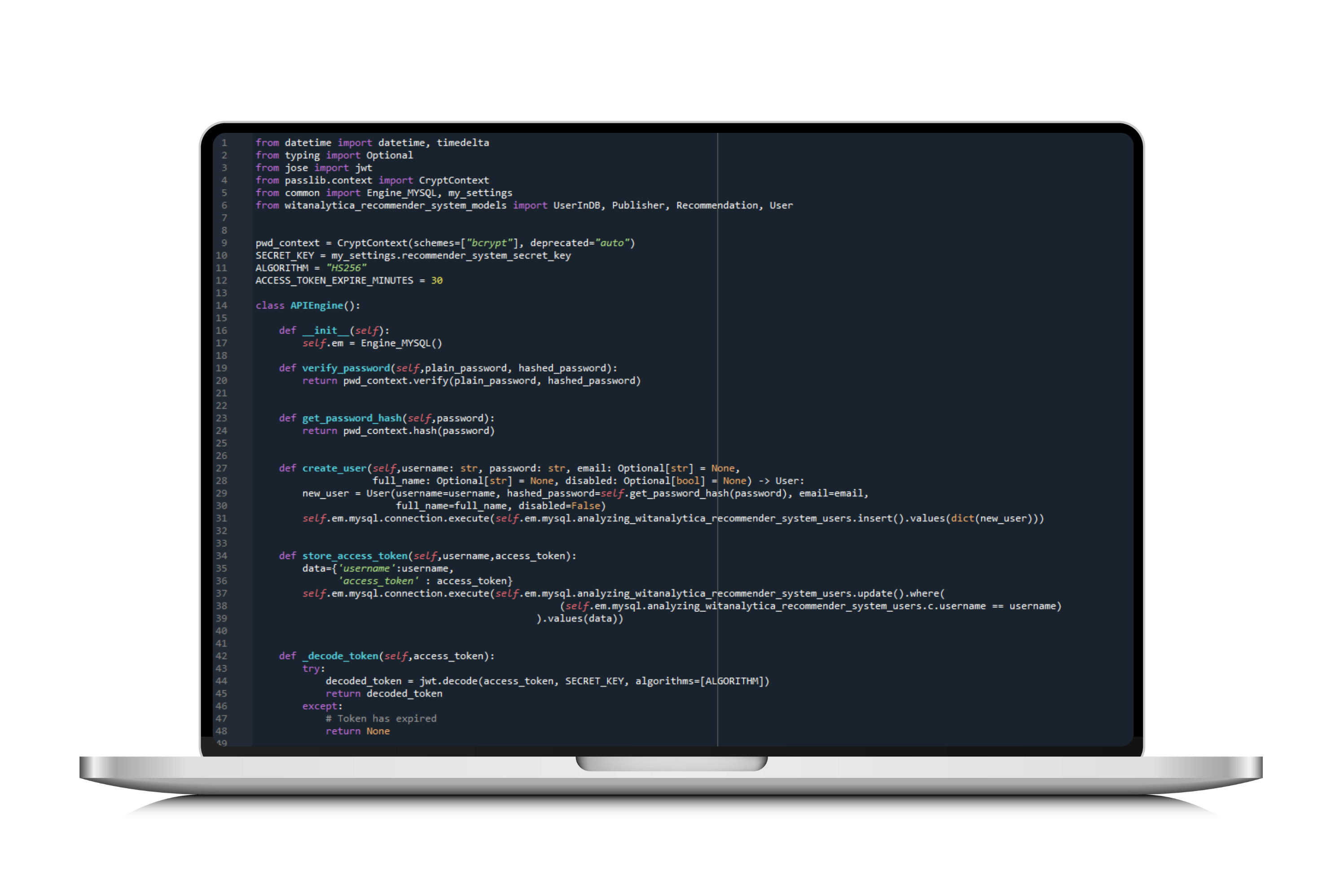

Car Rental Marketplace BI: Startup Analytics with Power BI

We built the analytics stack for a car rental marketplace using MongoDB, Python, MariaDB, and Power BI to centralize operations and enable data-driven growth.

Read case study →

BI Finance Reporting for a Multinational Affiliate Network

Automated multinational financial consolidation for a US affiliate network. Replaced manual spreadsheets with dynamic BI dashboards for revenue and margins.

Read case study →

Marketing Automation with RFM Segmentation for a Coffee Chain

How we helped a coffee shop chain connect POS data, build RFM segmentation, and automate SMS campaigns that reduced churn by 10% and grew revenue 12%.

Read case study →

Related Articles

Explore insights and guides related to our data engineering practice.

12 articles

AI Agent Frameworks for Lean IT Teams: How to Choose What You Can Actually Operate

How lean IT teams should evaluate AI agent frameworks by what they can deploy, observe, approve, and maintain in production.

Snowflake in Practice: What We Learned After Using It at Two Clients

An honest review of Snowflake from two data engineers who used it in production. What works well, what is frustrating, and when it makes sense over alternatives.

How to Deploy Data Analytics in Manufacturing: From Shop Floor to Boardroom

A practical guide to deploying data analytics in manufacturing logistics and supply chain, built around the five-tier meeting structure that actually runs modern factories. From shift-level dashboards to multi-plant regional operations, powered by a single source of truth.

GA4 vs Power BI vs Databases: OLTP, OLAP, and Schemas Explained

GA4, Power BI, and BigQuery handle data differently. Understand schemas, OLTP vs OLAP trade-offs, and when to use each type of data product in your stack.

Identity Resolution: Unifying Customer Data for Marketing

Customer data scattered across CRM, email, ads, and web analytics? Identity resolution unifies fragmented profiles for precise targeting and attribution.

Google Analytics to BigQuery: Export Scenarios and Use Cases

GA4 data in BigQuery unlocks analytics beyond standard reports. See real scenarios: cohort analysis, ML recommendations, and cross-platform attribution.

Inventory Analytics: Classification, Safety Stock, Consignment, and the KPIs That Matter

How to use data analytics for inventory classification, safety stock calculation, consignment stock management, and the KPIs that separate reactive planning from strategic inventory control.

Automate Excel and Google Sheets Reports with BI Tools

Still pasting data into spreadsheets weekly? Power BI, Tableau, and Domo automate reporting with live connections and scheduled refreshes. Here's how.

Apache Kafka vs Storm vs Flink: Real-Time Processing Compared

Compare Apache Kafka, Storm, and Flink for real-time data processing. Evaluated across throughput, latency, fault tolerance, and best-fit use cases.

Automate Power BI Dashboards: Stop Manual Excel Reporting

Manual Excel reporting wastes hours weekly. Automate Power BI dashboards with proper data pipelines, scheduled refreshes, and a scalable architecture.

Data Quality for Analytics: How Bad Data Undermines Your Insights

Poor data quality silently corrupts analytics. Learn key quality dimensions, common failure points, and validation strategies that protect your decisions.

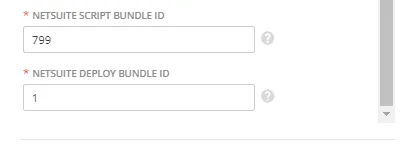

How to Connect NetSuite to Domo: What the Documentation Does Not Tell You

A practical guide to integrating NetSuite with Domo. We cover the Domo bundle installation, the SuiteAnalytics connector setup, the parameters nobody explains clearly, and the data sync bug that will cost you days.

Data Engineering FAQs

Data engineering services involve building and maintaining the infrastructure that enables organizations to collect, store, process, and analyze data at scale. This includes designing ETL pipelines, data warehouses, and data lakes.

- You face complex data integration challenges across multiple systems.

- You need to build or optimize data infrastructure for scale.

- Your data processes are manual, error-prone, or slow.

- You want to automate data processing and analytics workflows.

- You lack in-house expertise in advanced data technologies.

Outsourcing offers diverse expertise, cross-industry experience, flexible engagement models, and scalability without the commitment of full-time hires. It's ideal for specialized data engineering skills.

We use Python, Alteryx, KNIME, dbt, and Apache Airflow for ETL. For data storage, we use BigQuery, Redshift, Snowflake, PostgreSQL, MongoDB, and more. For cloud, we work with AWS, Azure, and Google Cloud.

It depends on your industry and existing tools. GCP integrates well with Google Analytics and BigQuery. AWS offers comprehensive services like Redshift and SageMaker. Azure excels in enterprise security and Microsoft ecosystem integration.

We implement rigorous data governance frameworks covering accuracy, completeness, and consistency. Our ETL processes include validation checks, mandatory fields, data cleaning, and regular data refresh schedules.
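As a rough illustration of the validation checks and mandatory fields mentioned above, here is a minimal Python sketch. The field names in `REQUIRED_FIELDS`, the rule that amounts must be non-negative, and the helper names are all assumptions made for the example; real rules depend on the dataset and are agreed per project.

```python
# Hypothetical mandatory fields for an incoming orders feed (assumption).
REQUIRED_FIELDS = ("customer_id", "order_date", "amount")


def is_blank(value):
    """Treat None and empty or whitespace-only strings as missing."""
    return value is None or str(value).strip() == ""


def validate_row(row):
    """Return a list of human-readable problems found in one record."""
    errors = [f"missing {field}" for field in REQUIRED_FIELDS if is_blank(row.get(field))]
    amount = row.get("amount")
    if not is_blank(amount):
        try:
            if float(amount) < 0:
                errors.append("negative amount")
        except (TypeError, ValueError):
            errors.append("amount is not numeric")
    return errors


def split_valid_invalid(rows):
    """Separate loadable rows from rejected rows, keeping the reasons for logging."""
    valid, rejected = [], []
    for row in rows:
        errors = validate_row(row)
        if errors:
            rejected.append((row, errors))
        else:
            valid.append(row)
    return valid, rejected


rows = [
    {"customer_id": "C1", "order_date": "2024-01-05", "amount": "19.90"},
    {"customer_id": "", "order_date": "2024-01-06", "amount": "-5"},
    {"customer_id": "C3", "order_date": "2024-01-07", "amount": "abc"},
]
valid, rejected = split_valid_invalid(rows)
for row, errors in rejected:
    print(row, errors)
```

Rejected rows are kept together with their reasons rather than silently dropped, so they can be logged and reviewed.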

Affiliate Marketing, FMCG, Healthcare, Manufacturing, Transportation, Logistics, SaaS, Media, Advertising, Retail, and E-commerce. Visit our industries page to see detailed solutions and case studies for each vertical.

You retain ownership of architecture and data. We use SSH keys, VPNs, IP whitelisting, OAuth APIs, and encryption. We align with GDPR and CCPA.

We offer two engagement models with transparent pricing.

On-Demand Expertise

All work is tracked and billed monthly at hourly rates:

- AI Agents Development and Implementation - $100/hr

- Data Engineering & Database Administration - $110/hr

- Business Intelligence Reporting - $90/hr

- Data Science - $120/hr

Reserved Capacity Agreement

- Pre-purchase a 50-hour monthly package at $4,500 (10% savings)

- Guaranteed priority availability regardless of our workload

We also offer project-based pricing for well-defined engagements.

Contact us to discuss the best fit for your needs.

A single table integration can take 1-2 hours. Comprehensive projects like building a data warehouse for multiple departments may span several months. We scale resources to meet deadlines.