There is a recurring question we get from clients evaluating AI agents: "Should we use LangGraph, or should we use OpenClaw?" It is the wrong framing. The two frameworks are not competing for the same workflows. They sit at two ends of a spectrum, and most production systems eventually use both.

This article walks through how we choose, and why the decision usually comes down to a single property of the workflow: how predictable are the steps?

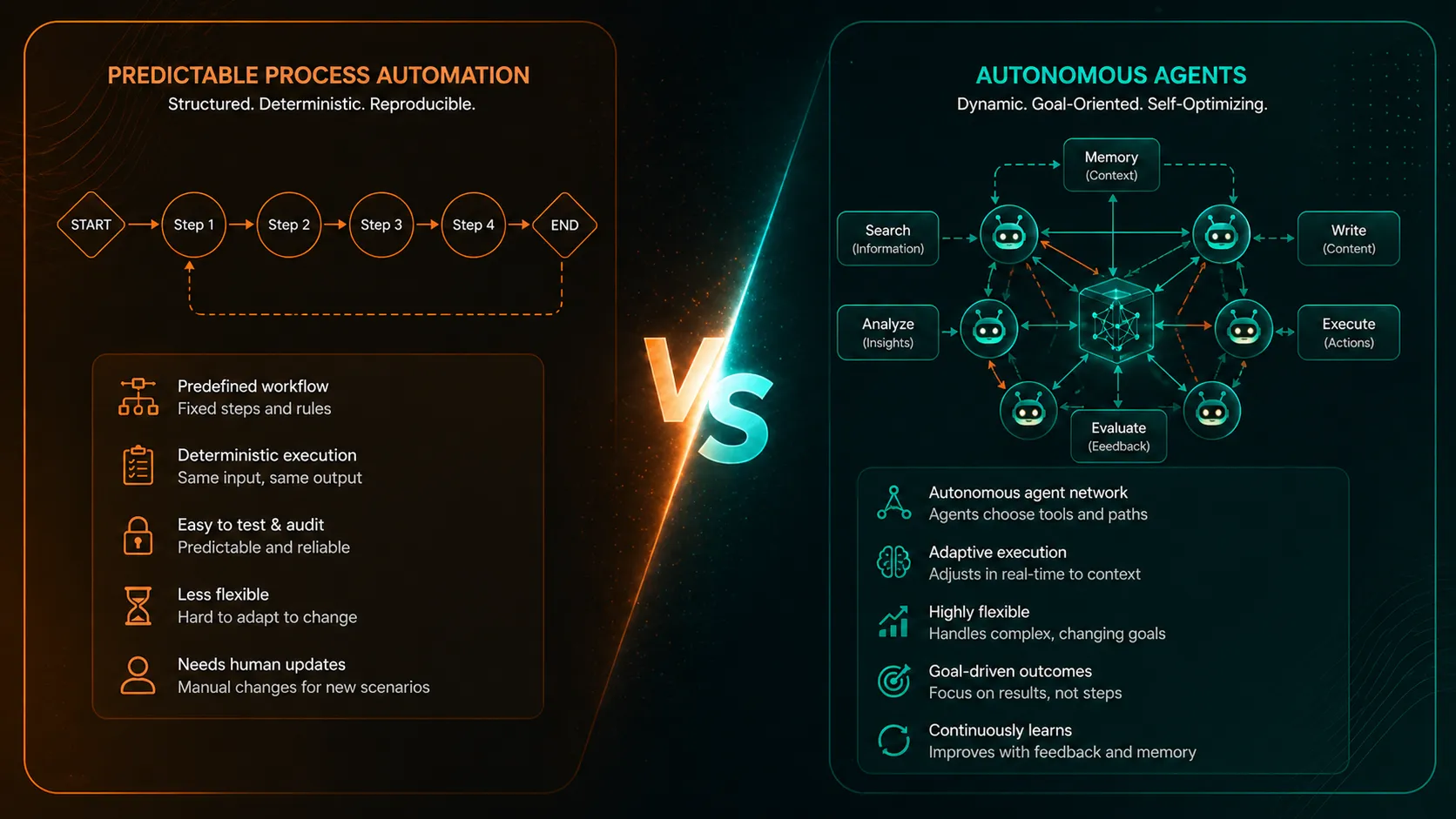

The spectrum

On one end, you have predictable process automation. The steps are known in advance. The order of operations is known. The exceptions are known and routed to known humans. Audit and reliability are non-negotiable. This is most of the work that lives inside an enterprise: order-to-cash, reconciliation, content publishing pipelines, lead routing, expense approval, ETL with human review.

On the other end, you have open-ended autonomous work. The agent does not know the steps in advance. It has to figure out what to read, what to try, and when to stop. The work might span dozens of files, hundreds of tool calls, or completely different systems on each run. Examples: exploratory analytics, code refactors across many files, drafting long-form content from scratch, multi-system reconciliation where the path is genuinely unpredictable.

LangGraph is built for the first end. OpenClaw and similar autonomous agents are built for the second.

Why LangGraph wins predictable workflows

LangGraph models a workflow as a state machine: nodes for each step, edges for the transitions, and a typed state object that captures everything the agent needs to remember.
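A library-free sketch can make those three pieces concrete. It is not LangGraph's API, since LangGraph provides its own `StateGraph` builder for this. The `ReconState` fields, the node names and the 1.00 matching tolerance are hypothetical. They are chosen only to show three things: typed state, nodes as plain functions, and edges, including one conditional edge that routes on the state.

```python
from typing import Callable, Dict, List, Optional, Tuple, TypedDict


class ReconState(TypedDict):
    """Typed state shared by every node (hypothetical fields)."""

    invoice_total: float
    po_total: float
    matched: bool
    route: str


def compare_totals(state: ReconState) -> ReconState:
    # Deterministic step: totals "match" within a hypothetical 1.00 tolerance.
    diff = abs(state["invoice_total"] - state["po_total"])
    return {**state, "matched": diff <= 1.0}


def auto_post(state: ReconState) -> ReconState:
    return {**state, "route": "auto_post"}


def send_to_review(state: ReconState) -> ReconState:
    return {**state, "route": "human_review"}


# Nodes: each step is a function from state to updated state.
NODES: Dict[str, Callable[[ReconState], ReconState]] = {
    "compare": compare_totals,
    "auto_post": auto_post,
    "review": send_to_review,
}

# Edges: each node maps to a function that picks the next node (None ends the run).
EDGES: Dict[str, Callable[[ReconState], Optional[str]]] = {
    "compare": lambda s: "auto_post" if s["matched"] else "review",  # conditional edge
    "auto_post": lambda s: None,
    "review": lambda s: None,
}


def run(start: str, state: ReconState) -> Tuple[ReconState, List[str]]:
    """Walk the graph from `start`; return the final state and the visited nodes."""
    path: List[str] = []
    node: Optional[str] = start
    while node is not None:
        path.append(node)
        state = NODES[node](state)
        node = EDGES[node](state)
    return state, path


final, path = run("compare", {"invoice_total": 250.0, "po_total": 250.5, "matched": False, "route": ""})
print(path)            # ['compare', 'auto_post']
print(final["route"])  # auto_post
```

Routing lives in the edge table rather than inside a prompt. As a result, every possible path can be read off the code before the workflow ever runs.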

Modeling a workflow as an explicit graph is a deceptively simple idea, and it pays off in production:

- Steps are explicit. Anyone can read the graph and understand what the agent does. No reading a model's mind.

- Audit is first-class. Every node call, tool invocation, and state transition can be checkpointed and traced, which keeps compliance teams happy.

- Human-in-the-loop is native. A node can interrupt the workflow, wait for human approval, and resume without losing state.

- Retries and durable execution. With a retry policy configured, a failed step is retried. With a persistent checkpointer, a crashed process resumes from its last checkpoint. If the workflow takes hours or days, that is fine.

- Model-agnostic. Each node can call Gemini, Claude, GPT, or a small local model. Routine nodes can use cheaper models without rewriting anything.
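The durability points above can be illustrated in a few lines of plain Python. This is a hypothetical, simplified runner. It is not how LangGraph implements retry policies, checkpointers or interrupts. In this sketch:

- each node gets a bounded number of attempts;
- every attempt lands in an audit log;
- state is serialized after each step;
- a node can pause the run until `resume()` merges in a human's decision.

The node names and the simulated failure are placeholders.

```python
import json
from typing import Any, Callable, Dict, List, Optional, Tuple

State = Dict[str, Any]
Node = Tuple[str, Callable[[State], State]]


class Interrupt(Exception):
    """Raised by a node that needs a human decision before it can finish."""


class Runner:
    """Hypothetical runner for a fixed sequence of nodes, with bounded retries,
    an append-only audit log, and a JSON checkpoint after every step."""

    def __init__(self, nodes: List[Node], max_attempts: int = 3) -> None:
        self.nodes = nodes
        self.max_attempts = max_attempts
        self.audit: List[Dict[str, Any]] = []
        self.checkpoint: Optional[str] = None  # serialized {"next": index, "state": ...}

    def run(self, state: State, start: int = 0) -> Optional[State]:
        for i in range(start, len(self.nodes)):
            name, fn = self.nodes[i]
            for attempt in range(1, self.max_attempts + 1):
                try:
                    state = fn(state)
                except Interrupt:
                    # Pause: persist state so this same node re-runs on resume.
                    self.audit.append({"node": name, "attempt": attempt, "status": "interrupted"})
                    self.checkpoint = json.dumps({"next": i, "state": state})
                    return None
                except Exception as exc:
                    self.audit.append({"node": name, "attempt": attempt, "status": f"error: {exc}"})
                    if attempt == self.max_attempts:
                        raise  # retries exhausted
                else:
                    self.audit.append({"node": name, "attempt": attempt, "status": "ok"})
                    break
            # Checkpoint after each completed step.
            self.checkpoint = json.dumps({"next": i + 1, "state": state})
        return state

    def resume(self, human_input: State) -> Optional[State]:
        """Reload the last checkpoint, merge the human's input, and continue."""
        if self.checkpoint is None:
            raise RuntimeError("nothing to resume")
        saved = json.loads(self.checkpoint)
        return self.run({**saved["state"], **human_input}, start=saved["next"])


attempts = {"fetch": 0}


def fetch(state: State) -> State:
    attempts["fetch"] += 1
    if attempts["fetch"] == 1:
        raise ConnectionError("transient")  # simulated flaky upstream call
    return {**state, "rows": 3}


def approve(state: State) -> State:
    if "approved" not in state:
        raise Interrupt()  # wait for a human
    return state


def write(state: State) -> State:
    return {**state, "written": state["approved"]}


runner = Runner([("fetch", fetch), ("approve", approve), ("write", write)])
paused = runner.run({})
final = runner.resume({"approved": True})
print(paused)  # None
print(final)   # {'rows': 3, 'approved': True, 'written': True}
```

The paused node runs again on resume and sees the human's input in the state. The saved checkpoint, not the in-memory call stack, is what carries the run across the pause.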

When the workflow is "do A, then ask the model to decide B, then call tool C, then either D or E, then wait for a human to approve, then write to the system," LangGraph is the framework that makes that boring, reliable, and cheap to operate.

Most of the work we deploy for clients lives here: supply-chain reconciliation, AI content operations under human review, and internal copilots over BI.

Why OpenClaw earns its keep on the other end

OpenClaw is positioned as a personal AI assistant: an autonomous agent that figures out what to do, what tools to use, and when to stop. The autonomy is the feature, not a side effect.
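At its core, an autonomous agent is a loop rather than a graph. At each step a model chooses the next tool based on what it has observed, and it also decides when it is done. The sketch below is hypothetical and is not OpenClaw's code or API. `scripted_policy` stands in for a model call, the tools and file contents are placeholders, and `max_steps` is an assumed safety budget.

```python
from typing import Callable, Dict, List, Tuple

Action = Tuple[str, str]  # (tool name or "finish", argument or final answer)
Tool = Callable[[str], str]


def run_agent(
    policy: Callable[[List[str]], Action],
    tools: Dict[str, Tool],
    max_steps: int = 20,
) -> Tuple[str, List[str]]:
    """Hypothetical agent loop: the policy picks the next tool from what has been
    observed so far and decides when to stop; max_steps is a safety budget."""
    observations: List[str] = []
    for _ in range(max_steps):
        tool, arg = policy(observations)
        if tool == "finish":
            return arg, observations
        observations.append(f"{tool}({arg}) -> {tools[tool](arg)}")
    return "step budget exhausted", observations


# Placeholder data and tools for the example.
FILES = {"a.csv": "total=100", "b.csv": "total=90"}
TOOLS: Dict[str, Tool] = {
    "list_files": lambda _: ",".join(sorted(FILES)),
    "read_file": lambda name: FILES[name],
}


def scripted_policy(obs: List[str]) -> Action:
    """Stand-in for a model call: list files, read each one, then compare."""
    if not obs:
        return ("list_files", "")
    listed = obs[0].split("-> ")[1].split(",")
    files_read = len(obs) - 1
    if files_read < len(listed):
        return ("read_file", listed[files_read])
    values = {o.split("-> ")[1] for o in obs[1:]}
    return ("finish", "consistent" if len(values) == 1 else "inconsistent")


answer, trace = run_agent(scripted_policy, TOOLS)
print(answer)  # inconsistent
```

Nothing in the loop encodes the order of steps. The sequence (list, read, read, finish) emerges from the policy at run time.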

The right way to deploy OpenClaw for an organization is not on a laptop. We put it in isolated VMs with sandboxing, scoped tools, and human approval for high-impact actions. With that scaffolding in place, OpenClaw earns its keep precisely where LangGraph would be painful:

- Open-ended exploration. "Look across these systems, find the inconsistencies, propose a fix." There is no fixed graph for that.

- Multi-file code or content work. The agent has to read many files, decide what to change, and iterate. Modeling that as a state machine is overkill.

- Workflows where the steps depend on what the agent finds. The branching is so dynamic that encoding it as a LangGraph graph would mean writing the agent twice: once in code and once in prompts.

If we tried to model these as deterministic LangGraph workflows, we would either fight the framework or end up with a graph so generic that it becomes "call the model, hope for the best." That is the moment to use OpenClaw instead.
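The scaffolding described earlier in this section, scoped tools plus human approval for high-impact actions, is what makes that autonomy acceptable inside an organization. One way to picture it is a gate that every tool call passes through. This is a hypothetical sketch, not OpenClaw configuration. `ToolGate`, the tool names and the stand-in approver are illustrative, and VM-level sandboxing sits outside anything shown here.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set

Tool = Callable[[str], str]


class ToolNotAllowed(Exception):
    """Raised when the agent asks for a tool outside its scope."""


@dataclass
class ToolGate:
    """Hypothetical policy layer that every agent tool call passes through."""

    allowed: Set[str]                     # the scoped tool set for this deployment
    high_impact: Set[str]                 # tools that need human approval per call
    approver: Callable[[str, str], bool]  # routes (tool, argument) to a reviewer
    log: List[str] = field(default_factory=list)

    def call(self, tools: Dict[str, Tool], name: str, arg: str) -> str:
        if name not in self.allowed:
            self.log.append(f"denied {name}")
            raise ToolNotAllowed(name)
        if name in self.high_impact and not self.approver(name, arg):
            self.log.append(f"rejected {name}({arg})")
            return "rejected by reviewer"
        self.log.append(f"ran {name}({arg})")
        return tools[name](arg)


TOOLS: Dict[str, Tool] = {
    "read_file": lambda path: f"<contents of {path}>",
    "send_email": lambda to: f"sent to {to}",
    "delete_file": lambda path: f"deleted {path}",
}

gate = ToolGate(
    allowed={"read_file", "send_email"},
    high_impact={"send_email"},
    # Stand-in for a human reviewer: approves internal recipients only.
    approver=lambda name, arg: arg.endswith("@example.com"),
)

r1 = gate.call(TOOLS, "read_file", "notes.md")          # low impact: runs directly
r2 = gate.call(TOOLS, "send_email", "cfo@example.com")  # high impact: approved
r3 = gate.call(TOOLS, "send_email", "x@elsewhere.org")  # high impact: rejected
try:
    gate.call(TOOLS, "delete_file", "notes.md")         # out of scope
except ToolNotAllowed as exc:
    print("blocked:", exc)  # blocked: delete_file
```

The gate's log doubles as an audit trail for the autonomous side. It records what the agent ran, what a reviewer rejected and what was never in scope.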

How we actually choose

When a client comes to us with a workflow they want to automate, we ask three questions:

- Are the steps predefined? If yes, lean LangGraph. If no, consider OpenClaw.

- Do audit and reliability matter more than autonomy? If yes, lean LangGraph. If autonomy is the point, lean OpenClaw.

- Is there a deterministic backbone with exploratory pockets? Then use both. LangGraph for the spine, OpenClaw or a similar autonomous agent for the open-ended sub-tasks.
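Read as a decision procedure, the three questions look roughly like this. The encoding is a hypothetical simplification. Mixed answers are one example: predefined steps where autonomy is nonetheless the goal, or the reverse. Sending those back for a finer split is an assumption of this sketch, not a rule stated above.

```python
def choose_framework(steps_predefined: bool, audit_over_autonomy: bool, exploratory_pockets: bool) -> str:
    """Hypothetical encoding of the three questions above."""
    if exploratory_pockets:
        # Deterministic backbone with open-ended sub-tasks.
        return "both: LangGraph spine, autonomous agent for sub-tasks"
    if steps_predefined and audit_over_autonomy:
        return "LangGraph"
    if not steps_predefined and not audit_over_autonomy:
        return "OpenClaw"
    # Mixed answers (assumption): split the workflow and ask again per part.
    return "split the workflow and ask again per part"


print(choose_framework(True, True, False))  # LangGraph
```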

We have never built a serious production system that was pure LangGraph or pure OpenClaw. The interesting workflows always have both characters: a predictable part that benefits from a state machine, and a hairy part where giving the agent room to think is the whole point.

The wrong question, reframed

"LangGraph or OpenClaw" is the wrong question because it assumes one tool needs to win. The right question is: which parts of this workflow should be predictable, and which should be autonomous?

Once you have that map, the framework choice almost makes itself. The predictable parts go into LangGraph nodes with proper state, retries, and human checkpoints. The autonomous parts run as sandboxed OpenClaw agents inside isolated VMs, with audit logging and approval routing. For clients standardized on Google Cloud, grounded data access goes through Vertex AI and the Gemini Enterprise Agent Platform. Frontier reasoning calls go to whichever model wins on quality and cost for that specific node.
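One reasonable reading of "wins on quality and cost" is a per-node rule: use the cheapest model that clears that node's quality bar. This also covers the earlier point about sending routine nodes to cheaper models. The sketch below is hypothetical. The model names, scores, costs and thresholds are placeholders, and real quality numbers would come from evaluations run on each node's own inputs.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ModelOption:
    name: str
    quality: float  # score on this node's own eval set, 0..1
    cost: float     # cost per call, in whatever unit you track


def pick_model(options: List[ModelOption], min_quality: float) -> ModelOption:
    """Cheapest option that clears the node's quality bar; if none does,
    fall back to the highest-quality option."""
    passing = [m for m in options if m.quality >= min_quality]
    if passing:
        return min(passing, key=lambda m: m.cost)
    return max(options, key=lambda m: m.quality)


# Placeholder numbers for illustration only; real ones come from per-node evals.
EVALS: Dict[str, List[ModelOption]] = {
    "classify": [ModelOption("small-local", 0.91, 0.0002), ModelOption("frontier", 0.96, 0.02)],
    "root_cause": [ModelOption("small-local", 0.62, 0.0002), ModelOption("frontier", 0.90, 0.02)],
}
QUALITY_BAR: Dict[str, float] = {"classify": 0.80, "root_cause": 0.85}

routing = {node: pick_model(opts, QUALITY_BAR[node]).name for node, opts in EVALS.items()}
print(routing)  # {'classify': 'small-local', 'root_cause': 'frontier'}
```

Because the rule runs per node, the routing for a given node can be revisited whenever a newer model shifts its eval scores, without touching the rest of the workflow.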

That is what we mean when we say architecture fit, not framework fashion. If you want help applying that thinking to your workflows, our AI Agent Development practice is built around it.