Everyone is excited about connecting Claude, ChatGPT, and other AI agents to business tools.

Connect the agent to HubSpot. Connect it to the invoicing platform. Connect it to your ERP, GA4, Search Console, warehouse, dashboards, and internal systems. Then ask it to find problems, prepare tasks, summarize customers, flag risks, or recommend actions.

That sounds like the future of business automation.

It may also be where the wrong answer starts.

The hidden problem is simple: access to business applications is not the same as access to business truth. APIs and MCP (Model Context Protocol) servers can help an AI agent reach your systems. They do not automatically teach the agent what your company means by customer, revenue, margin, valid billing data, true growth, or operational risk.

That is why AI agents can still get business questions wrong, even when they are connected to the right tools.

The problem is not just data access

Most business automation conversations start with access.

Can the agent read the CRM? Can it check invoices? Can it inspect tickets? Can it query the warehouse? Can it open dashboards? Can it call a SaaS tool through an MCP server?

Those are useful questions, but they are not the most important ones.

The more important question is: does the agent know which business definition it should trust?

Imagine asking an AI agent:

"Find new customers in HubSpot that do not have valid billing data in our invoicing system."

This sounds straightforward. It is not.

The agent needs to know what "new customer" means. Is it a closed-won deal, a signed contract, a first order, an activated account, or a customer that has completed onboarding?

It also needs to know what "valid billing data" means. Is a billing email enough? Do you need a VAT number, billing address, purchase order requirement, legal entity match, payment term, tax status, or currency?

It needs to understand timing. Should the check happen on close date, activation date, first delivery date, invoice date, or revenue recognition date?

It needs to understand exceptions. Some customers may be billed through partners. Some may require manual finance review. Some may be under a parent account. Some may intentionally be blocked until compliance is complete.

If those rules are not already defined somewhere reliable, the agent has to infer them. It can produce a neat task list and still be wrong.
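To make this concrete, here is a minimal Python sketch of what it looks like when those rules are written down instead of inferred. Everything in it is an assumption for illustration: the field names, the choice of closed-won date as the "new customer" rule, the three required billing fields, and the partner-billing exception are hypothetical, not taken from HubSpot or any invoicing product.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Customer:
    # Hypothetical fields; real CRM and invoicing schemas will differ.
    name: str
    closed_won_on: Optional[date]
    billing_email: Optional[str]
    vat_number: Optional[str]
    billing_address: Optional[str]
    billed_via_partner: bool = False


def is_new_customer(c: Customer, since: date) -> bool:
    # Assumed rule: "new" means a deal closed-won on or after `since`.
    return c.closed_won_on is not None and c.closed_won_on >= since


def missing_billing_fields(c: Customer) -> list[str]:
    # Assumed rule: email, VAT number, and address are all required.
    required = {
        "billing_email": c.billing_email,
        "vat_number": c.vat_number,
        "billing_address": c.billing_address,
    }
    return [field for field, value in required.items() if not value]


def needs_billing_followup(c: Customer, since: date) -> bool:
    # Assumed exception: partner-billed customers are out of scope.
    if c.billed_via_partner:
        return False
    return is_new_customer(c, since) and bool(missing_billing_fields(c))
```

Each comment marks a decision that someone in the business has to make. If nobody has made it, the agent makes it silently.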

MCP vs API is only part of the story

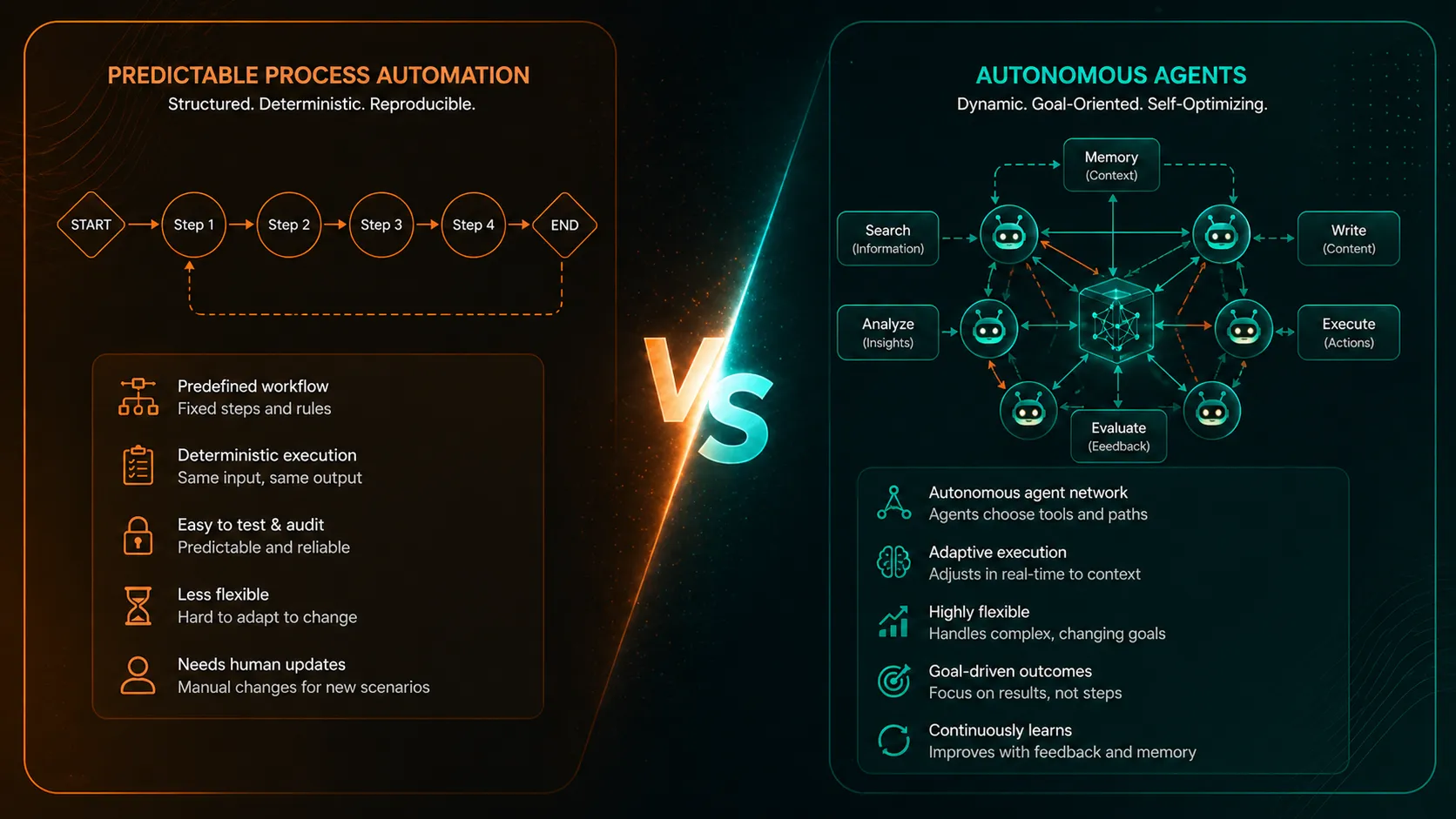

MCP vs API is a useful technical conversation, but it is not the real business issue.

An API lets software systems expose data and actions. An MCP server describes tools in a standard format so that AI agents can discover and call them. In many cases, an MCP server sits on top of existing APIs and makes business systems easier for an AI assistant to use.

That matters. But it does not solve the business-definition problem.

If an MCP server exposes the same raw application objects that an API exposes, the agent still has to decide how to interpret them. It still has to decide which records count, which records should be excluded, how systems should be reconciled, and which definition should win when tools disagree.

MCP can improve the way an agent uses tools. It does not automatically make the answer trustworthy.
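The difference can be sketched with two plain Python functions that could sit behind either an API endpoint or an MCP tool. This is an illustration only: it uses no real MCP SDK, and the deal records, the stage names, and the exclusion rule are made up.

```python
# Hypothetical deal records, standing in for raw CRM objects.
RAW_DEALS = [
    {"id": 1, "stage": "closedwon", "is_test": False, "amount": 1200},
    {"id": 2, "stage": "closedwon", "is_test": True, "amount": 50},
    {"id": 3, "stage": "contractsent", "is_test": False, "amount": 900},
]


def list_deals() -> list[dict]:
    # Raw tool: returns every record and leaves the caller to decide
    # which ones count.
    return [dict(deal) for deal in RAW_DEALS]


def count_new_customers() -> dict:
    # Governed tool: applies an assumed, pre-approved rule on the server
    # side and returns the definition together with the answer.
    counted = [
        deal for deal in RAW_DEALS
        if deal["stage"] == "closedwon" and not deal["is_test"]
    ]
    return {
        "value": len(counted),
        "definition": "closed-won deals, test records excluded",
        "deal_ids": [deal["id"] for deal in counted],
    }
```

Both functions are equally easy for an agent to call. Only the second one tells it which records count and why.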

Simple lookups are not the same as business analysis

Some AI-agent tasks are low risk because they are narrow and current-state oriented.

For example:

- Does this account have a billing email?

- Which sales owner is assigned to this customer?

- Is this invoice marked overdue?

- What was the latest support ticket about?

Those are lookup questions. The agent is mostly retrieving a known field or summarizing a bounded record.

Business questions are different:

- Are sales up year to date versus the same period last year?

- Which customer segments are becoming less profitable?

- Did margin improve after the pricing change?

- Which products are growing without healthy contribution margin?

- Which suppliers are creating persistent delivery risk?

- Which marketing pages actually grew, rather than receiving migrated traffic?

These are analytical questions. They require definitions, time periods, exclusions, comparisons, reconciliations, and context.

There is a reason most SaaS APIs do not expose answers like "year-to-date sales versus previous year-to-date by customer segment, adjusted for returns, cancellations, currency, discounts, and attribution." That answer is normally calculated in a data warehouse, BI model, finance report, approved dashboard, or semantic layer.

It usually cannot be calculated safely by calling a few live API endpoints in the middle of a conversation.
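Even a stripped-down version of that calculation carries several decisions. The Python sketch below compares year-to-date net sales with the same period one year earlier. The order rows are invented, and the rules (exclude cancelled orders, subtract returns, map 29 February to 28 February) are assumptions chosen for the example. Currency, discounts, and attribution are left out entirely.

```python
from datetime import date

# Hypothetical order rows; a real warehouse model would hold far more detail.
ORDERS = [
    {"day": date(2023, 2, 10), "amount": 1000.0, "returned": 0.0, "cancelled": False},
    {"day": date(2023, 3, 1), "amount": 300.0, "returned": 0.0, "cancelled": True},
    {"day": date(2023, 5, 5), "amount": 500.0, "returned": 0.0, "cancelled": False},
    {"day": date(2024, 1, 15), "amount": 800.0, "returned": 200.0, "cancelled": False},
    {"day": date(2024, 3, 20), "amount": 700.0, "returned": 0.0, "cancelled": False},
    {"day": date(2024, 4, 2), "amount": 400.0, "returned": 0.0, "cancelled": False},
]


def same_day_previous_year(d: date) -> date:
    # Assumed convention: 29 February maps to 28 February in the prior year.
    try:
        return d.replace(year=d.year - 1)
    except ValueError:
        return d.replace(year=d.year - 1, day=28)


def ytd_net_sales(orders: list[dict], as_of: date) -> float:
    # Assumed rule: cancelled orders are excluded and returns are subtracted.
    start = date(as_of.year, 1, 1)
    return sum(
        o["amount"] - o["returned"]
        for o in orders
        if not o["cancelled"] and start <= o["day"] <= as_of
    )


def ytd_vs_prior(orders: list[dict], as_of: date) -> dict:
    # Compare this year's window with the same window one year earlier.
    current = ytd_net_sales(orders, as_of)
    prior = ytd_net_sales(orders, same_day_previous_year(as_of))
    change = (current - prior) / prior if prior else None
    return {"current": current, "prior": prior, "change": change}
```

Change any one of the assumed rules and the reported growth changes with it, which is why this logic belongs in an approved model rather than in an improvised tool call.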

The risk grows with the time period

The longer the period being analyzed, the more ways an AI agent can get the answer wrong.

A question about a single current customer may depend on a few fields. A question about a quarter, year-to-date period, or multi-year trend may depend on historical changes, late-arriving data, duplicate records, migrated IDs, returns, cancellations, currency conversion, channel attribution, changed definitions, and finance adjustments.

That is why strategic questions are more fragile than operational lookups.

An AI agent may be able to tell you what a CRM record says today. That does not mean it can correctly calculate how customer profitability changed over the last twelve months.

An agent may be able to list invoices from a billing tool. That does not mean it can correctly answer whether all delivered work has been billed under customer-specific contract terms.

An agent may be able to read traffic numbers from analytics tools. That does not mean it can identify true organic growth after redirects, tracking changes, paid leakage, and branded demand are excluded.

The bigger the business question, the more dangerous it becomes to let the agent assemble the metric from raw tool access.

Fluent answers can hide weak assumptions

AI agents often fail in a way that looks successful.

They find data. They summarize it. They produce a confident answer. They may even create a task list, draft an email, or recommend an action.

The problem is that the answer may be based on the easiest interpretation of the available data, not the approved interpretation used by the business.

That is especially dangerous because fluent language creates confidence. A spreadsheet with a broken formula looks suspicious. A polished AI answer can look authoritative even when the metric underneath it was improvised.

The failure is not always technical. Often, the tool call worked. The API responded. The MCP server returned data. The agent completed the task.

The failure is that the wrong business definition was used.

The agent should not invent the metric

There is a simple test for AI-agent readiness.

If two experienced people in your company would calculate the answer differently, an AI agent should not calculate it from raw application access.

The definition should already exist before the agent is allowed to act on it.
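The test can be run on real data. The Python sketch below applies two plausible definitions of "new customer" to the same accounts and lists the ones they disagree on. The accounts, the field names, and the labels "sales view" and "finance view" are hypothetical.

```python
from datetime import date
from typing import Optional

# Hypothetical accounts with two candidate "new customer" dates.
ACCOUNTS = [
    {"name": "A", "closed_won": date(2024, 1, 10), "first_invoice": date(2024, 1, 20)},
    {"name": "B", "closed_won": date(2024, 1, 25), "first_invoice": None},
    {"name": "C", "closed_won": date(2023, 12, 15), "first_invoice": date(2024, 1, 5)},
]


def in_window(d: Optional[date], start: date, end: date) -> bool:
    return d is not None and start <= d <= end


def new_customers_sales_view(accounts, start: date, end: date) -> set[str]:
    # Assumed sales definition: the deal was closed-won in the window.
    return {a["name"] for a in accounts if in_window(a["closed_won"], start, end)}


def new_customers_finance_view(accounts, start: date, end: date) -> set[str]:
    # Assumed finance definition: the first invoice was issued in the window.
    return {a["name"] for a in accounts if in_window(a["first_invoice"], start, end)}


def disputed_accounts(accounts, start: date, end: date) -> set[str]:
    # Accounts counted under exactly one of the two definitions.
    sales = new_customers_sales_view(accounts, start, end)
    finance = new_customers_finance_view(accounts, start, end)
    return sales ^ finance
```

For January 2024, both views count two new customers, yet they agree on only one of them. An agent that picked either definition would return a plausible number that half the company would dispute.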

Your company should know how it defines:

- New customer.

- Active customer.

- Valid billing data.

- Recognized revenue.

- Gross margin.

- On-time delivery.

- Supplier risk.

- True organic growth.

- Year-to-date sales.

Those definitions may live in BI models, warehouse tables, finance reports, approved dashboards, internal operating rules, or documented processes.

The important point is not where they live. The important point is that they should not be invented for the first time inside an AI response.

More tools are not the answer

When an AI agent gives a weak answer, the instinct is often to give it more access.

Connect another system. Add another MCP server. Expose another dashboard. Give it another API.

Sometimes that helps. Often it just gives the agent more fragments to reconcile.

If the company has not defined the business answer, more access can make the answer sound more informed without making it more correct.

The better question is not "what else can the agent access?"

The better question is "which answers are already trusted enough for the agent to use?"

For many companies, this means the AI-agent conversation eventually becomes a data conversation. Before an agent can safely automate work, the business needs reliable definitions, reconciled data, approved metrics, and clear rules about which actions require human review.
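One way to picture "trusted enough for the agent to use" is a small registry of approved metrics that the agent must consult before answering. The Python sketch below is an assumption about how such a registry could look. The metric names, sources, owners, and review flags are placeholders, not a reference to any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovedMetric:
    name: str
    source: str  # where the approved calculation lives (hypothetical)
    owner: str   # who signs off on the definition (hypothetical)
    requires_human_review: bool


# Hypothetical registry; in practice this might live in a semantic layer
# or a data catalog rather than in code.
REGISTRY = {
    "year-to-date sales": ApprovedMetric(
        "year-to-date sales", "finance_reporting.ytd_sales", "Finance", False
    ),
    "valid billing data": ApprovedMetric(
        "valid billing data", "billing_rules.v3", "Finance Operations", True
    ),
}


def plan_for(metric_name: str) -> str:
    # Decide what the agent may do with a requested metric.
    metric = REGISTRY.get(metric_name.strip().lower())
    if metric is None:
        # No approved definition: ask rather than improvise one.
        return "ask_for_clarification"
    if metric.requires_human_review:
        return "route_to_human_review"
    return "use_approved_answer"
```

The point of the design is the first branch: when no approved definition exists, the agent asks instead of inventing one.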

What business leaders should watch for

AI agents are useful. They can help teams move faster, summarize complex records, monitor predefined conditions, prepare drafts, and route work for review.

But leaders should be cautious when an agent is asked to answer questions involving:

- Historical comparisons.

- Year-to-date or previous-period performance.

- Profitability or margin.

- Billing readiness or invoice completeness.

- Customer status across multiple systems.

- Attribution, refunds, cancellations, or exclusions.

- Supplier, delivery, manufacturing, or operational risk.

- Any action that affects customers, revenue, finance, or compliance.

Those workflows may still be good candidates for AI assistance. They just need trusted business definitions behind them.

MCP and APIs can connect the agent. They cannot define the business.

MCP servers and APIs are part of the infrastructure for AI automation. They help agents use tools, read systems, and trigger workflows.

But they do not replace the work of defining business truth.

If the business definition is unclear, the AI agent will ask for clarification, make an assumption, or produce an answer that looks precise but is not reliable.

That is the hidden data problem behind AI agents in business automation.

The question is not only "can Claude connect to our tools?"

The question is "are the answers already defined well enough for Claude to use?"

AI agents should act on validated business answers, not improvise business logic from application access.