By many industry estimates, between 60% and 80% of AI projects never make it to production. The cause is rarely the algorithm; far more often it is the data feeding it, which is unreliable, incomplete, or inaccessible. Organizations rush to adopt machine learning and AI in pursuit of a competitive edge, only to discover they skipped the foundational steps that make these technologies work.

This guide provides a practical framework for evaluating your organization's data maturity and a clear, step-by-step path from raw data collection to successful AI deployment.

Why Most AI Initiatives Fail

The typical pattern looks like this: leadership reads about AI transforming an industry, hires a data scientist or purchases an AI platform, then discovers months later that the data needed to train models is scattered across spreadsheets, siloed databases, and inconsistent formats.

The core issue is not a technology gap. It is a maturity gap. AI and machine learning require a level of data discipline that most organizations have not yet built.

Common failure patterns include:

- No centralized data: Customer records live in one system, sales data in another, and operational metrics in a spreadsheet someone emails around weekly.

- Poor data quality: Duplicate records, missing fields, inconsistent naming conventions, and outdated entries make any analysis unreliable.

- No baseline metrics: If you cannot measure current performance accurately, you have no way to evaluate whether an AI model improves anything.

- Jumping to prediction before understanding: Teams attempt predictive models before they have mastered simple descriptive reporting.

The Five Levels of Data Maturity

Think of data maturity as a staircase. Each level builds on the one before it, and skipping levels creates an unstable foundation.

Level 1: Ad-Hoc Data Collection

Where you are: Data exists but is scattered. Teams rely on manual exports from various tools. There is no single source of truth. Reporting is inconsistent and depends on who pulls the numbers.

Signs: Different teams report different revenue figures for the same period. Nobody trusts the data. Reports take days to produce and are outdated by the time they are shared.

What to focus on: Identify your core data sources and begin consolidating them. Start with the most critical business metrics and build a simple, reliable way to track them.
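To show what consolidation involves, the sketch below merges customer records exported from two systems into a single set keyed by a normalized email address. The record fields, the use of email as the matching key, and the "most recently updated record wins" rule are assumptions chosen for illustration. Real consolidation usually needs more careful matching rules.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CustomerRecord:
    # Hypothetical record shape shared by the exports from each system.
    email: str
    name: str
    source: str
    updated: date


def normalize_email(email: str) -> str:
    # "Jane@Example.com " and "jane@example.com" should map to the same customer.
    return email.strip().lower()


def consolidate(*sources: list) -> dict:
    """Merge records from several systems, keeping the most recently updated record per customer."""
    merged = {}
    for records in sources:
        for record in records:
            key = normalize_email(record.email)
            current = merged.get(key)
            if current is None or record.updated > current.updated:
                merged[key] = record
    return merged


# Hypothetical exports from a CRM and a billing system.
crm = [CustomerRecord("Jane@Example.com", "Jane Doe", "crm", date(2024, 1, 5))]
billing = [
    CustomerRecord("jane@example.com ", "J. Doe", "billing", date(2024, 3, 1)),
    CustomerRecord("sam@example.com", "Sam Lee", "billing", date(2024, 2, 10)),
]

customers = consolidate(crm, billing)
print(len(customers))                        # 2
print(customers["jane@example.com"].source)  # billing
```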

Level 2: Structured Collection and Storage

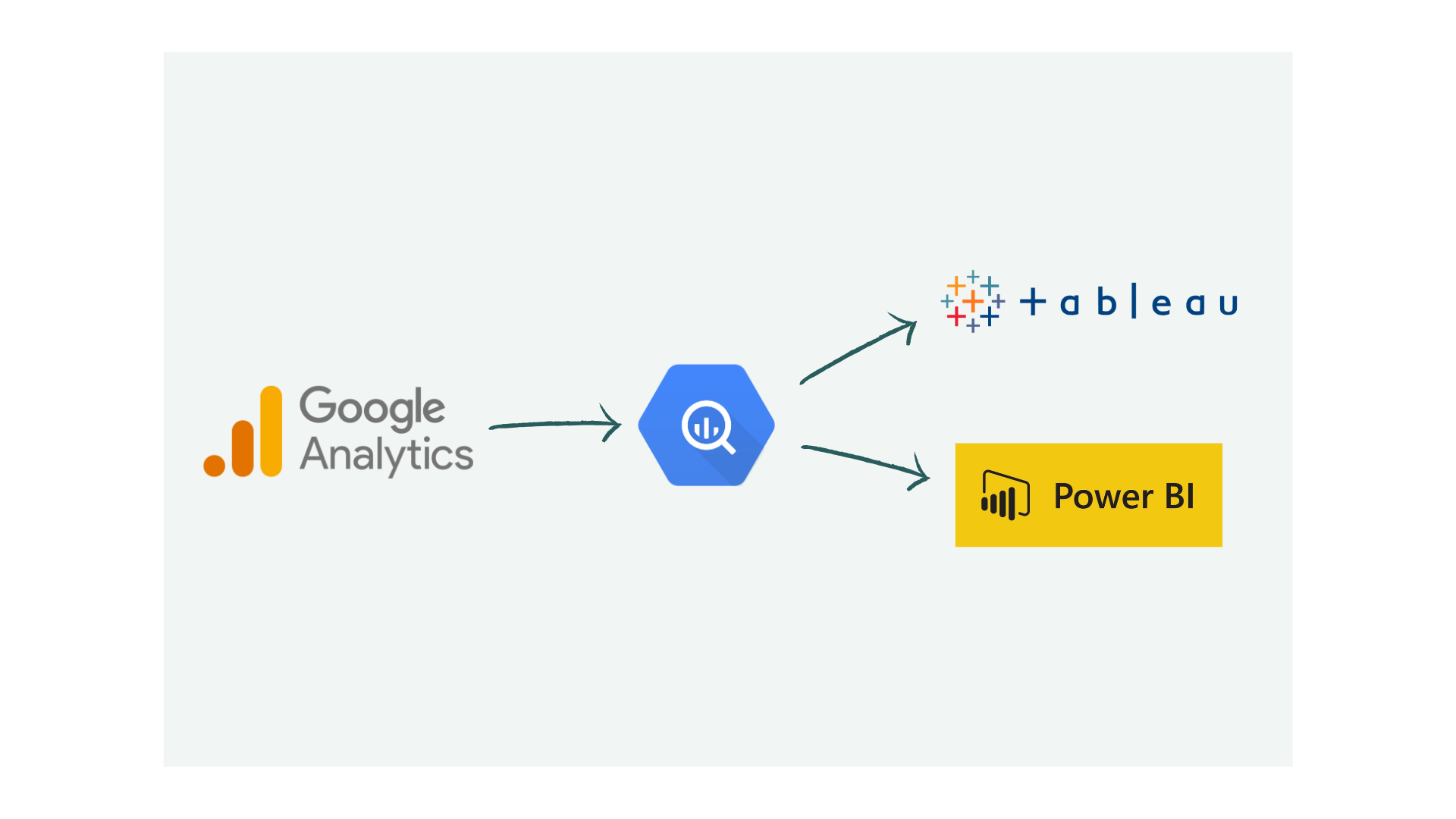

Where you are: Data is flowing into a central repository, whether that is a data warehouse, a database, or a structured cloud storage solution. Collection is automated rather than manual.

Signs: You have automated data pipelines. There is a defined process for how data enters your systems. Basic data quality checks are in place.

What to focus on: Establish data quality standards and governance. Define who owns which data, what the acceptable quality thresholds are, and how data errors are resolved.
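A basic quality check can be as simple as measuring a few rates and comparing them against thresholds the data owner has agreed to. The sketch below assumes rows arrive as Python dictionaries. The field names and the 2% and 1% limits are hypothetical placeholders, not recommended values.

```python
def quality_report(rows: list, required_fields: list, key_field: str) -> dict:
    """Compute the share of rows missing a required field and the share of duplicate keys."""
    total = len(rows)
    if total == 0:
        return {"missing_rate": 0.0, "duplicate_rate": 0.0}
    missing = sum(
        1 for row in rows if any(row.get(field) in (None, "") for field in required_fields)
    )
    duplicates = total - len({row.get(key_field) for row in rows})
    return {"missing_rate": missing / total, "duplicate_rate": duplicates / total}


# Hypothetical thresholds that a data owner might sign off on.
THRESHOLDS = {"missing_rate": 0.02, "duplicate_rate": 0.01}


def check_thresholds(report: dict, thresholds: dict = THRESHOLDS) -> dict:
    # True means the metric is within its acceptable limit.
    return {metric: report[metric] <= limit for metric, limit in thresholds.items()}


orders = [
    {"order_id": 1, "customer": "A", "amount": 120.0},
    {"order_id": 2, "customer": "", "amount": 80.0},   # missing customer
    {"order_id": 3, "customer": "B", "amount": 45.5},
    {"order_id": 3, "customer": "B", "amount": 45.5},  # duplicate order_id
]
report = quality_report(orders, required_fields=["customer", "amount"], key_field="order_id")
print(report)                    # {'missing_rate': 0.25, 'duplicate_rate': 0.25}
print(check_thresholds(report))  # {'missing_rate': False, 'duplicate_rate': False}
```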

Level 3: Descriptive Analytics and Reporting

Where you are: You can reliably answer "what happened?" Your dashboards and reports update automatically and stakeholders trust them. KPIs are well-defined and consistently measured.

Signs: Leadership makes decisions based on dashboards rather than gut instinct. You can pull accurate historical data going back at least 12 months. Teams share a common definition of key metrics.

What to focus on: Build diagnostic capabilities. Move from "what happened" to "why did it happen" by adding drill-down capabilities, segmentation analysis, and root-cause reporting.

Level 4: Diagnostic and Predictive Analytics

Where you are: You can diagnose why performance gaps exist and identify the top factors driving outcomes. Statistical models help you forecast trends. You are beginning to test hypotheses with data.

Signs: You routinely identify the top 5-10 factors that impact your KPIs. Forecasting models exist for revenue, demand, or customer behavior. A/B testing is part of your decision-making process.
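For a conversion-style metric, one common way to evaluate an A/B test is a two-proportion z-test. This is a minimal sketch that assumes large samples and independent visitors. The visitor and conversion counts are invented for illustration.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(successes_a: int, n_a: int, successes_b: int, n_b: int) -> tuple:
    """Compare two conversion rates; returns (z statistic, two-sided p-value)."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (successes_a + successes_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical test: variant B converts 250 of 2,000 visitors vs. 200 of 2,000 for A.
z, p = two_proportion_z_test(200, 2000, 250, 2000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

If the p-value falls below a threshold agreed on in advance (often 0.05), the difference is unlikely to be noise. That result says nothing about whether the lift is large enough to matter for the business.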

What to focus on: Begin targeted AI pilots. Choose specific, well-defined problems where you have clean historical data and a clear success metric.

Level 5: AI and Machine Learning at Scale

Where you are: AI models are deployed in production systems, making or supporting real-time decisions. Models are monitored, retrained regularly, and integrated into operational workflows.
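Monitoring can start with something as plain as comparing a model's recent error against its error at deployment. The sketch below assumes you already log absolute errors once actual outcomes are known. The 20% tolerance is an arbitrary placeholder, and production setups often watch for drift in the input data as well.

```python
from statistics import mean


def needs_retraining(baseline_errors: list, recent_errors: list, tolerance: float = 0.20) -> bool:
    """Return True when recent mean absolute error exceeds the baseline by more than `tolerance`."""
    baseline = mean(baseline_errors)
    recent = mean(recent_errors)
    return recent > baseline * (1 + tolerance)


# Hypothetical absolute errors: at deployment time vs. over the most recent weeks.
baseline_errors = [10, 12, 11, 9]  # mean 10.5
recent_errors = [14, 15, 13]       # mean 14.0, more than 20% worse
print(needs_retraining(baseline_errors, recent_errors))  # True
```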

Signs: Recommendation systems personalize customer experiences. Predictive maintenance reduces downtime. Automated anomaly detection flags issues before they escalate.
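Automated anomaly detection does not have to begin with machine learning. A rolling z-score, sketched below under the assumption of a roughly stable daily metric, flags any value far from the trailing window's average. The 7-day window and the threshold of 3 standard deviations are illustrative choices.

```python
from statistics import mean, stdev


def flag_anomalies(values: list, window: int = 7, threshold: float = 3.0) -> list:
    """Return indices whose value is more than `threshold` standard deviations from the trailing-window mean."""
    flagged = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical daily order counts with one spike.
daily_orders = [100, 102, 98, 101, 99, 100, 103, 101, 250, 100]
print(flag_anomalies(daily_orders))  # [8]
```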

The AI Readiness Checklist

Use this checklist to honestly assess where your organization stands. Score each item as Yes (1) or No (0).

Data Foundation (Levels 1-2)

- We have a centralized data storage solution (data warehouse or data lake)

- Data from our core systems flows automatically into this central store

- We have defined data quality standards and regularly audit compliance

- There is a documented data governance policy with clear data ownership

- Historical data spans at least 12 months for our key business metrics

- Data formats and naming conventions are standardized across teams

Analytics Capability (Levels 3-4)

- Operational dashboards are trusted and used for daily decision-making

- KPIs are formally defined and consistently measured across the organization

- We can diagnose root causes of performance changes, not just report them

- Statistical analysis or forecasting is used for at least one business function

- We have at least one analyst or team skilled in SQL, Python, or R

AI Readiness (Level 5)

- We have identified specific use cases where AI could measurably improve outcomes

- For each use case, we have clean, labeled training data available

- We have defined success criteria that go beyond "implement AI"

- There is executive sponsorship and a realistic budget for sustained investment

- A plan exists for monitoring model performance and retraining over time

Scoring: 0-5 points means start at Level 1. 6-10 points means strengthen your analytics foundation. 11-16 points means you are genuinely ready for AI pilots.
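The scoring rule can be expressed as a small function. It assumes the 16 yes/no answers are grouped by the three sections above (six, five, and five items) and treats any total of 11 or more as pilot-ready, since 16 is the maximum possible score. The per-section subtotals are an illustrative addition that shows which layer is weakest.

```python
# Number of checklist items in each section, as listed above.
SECTION_SIZES = {"Data Foundation": 6, "Analytics Capability": 5, "AI Readiness": 5}


def score_checklist(answers: dict) -> tuple:
    """Return (total score, per-section subtotals, recommendation) for yes/no answers."""
    subtotals = {}
    for section, size in SECTION_SIZES.items():
        items = answers[section]
        if len(items) != size:
            raise ValueError(f"{section}: expected {size} answers, got {len(items)}")
        subtotals[section] = sum(items)  # True counts as 1, False as 0
    total = sum(subtotals.values())
    if total <= 5:
        recommendation = "Start at Level 1"
    elif total <= 10:
        recommendation = "Strengthen your analytics foundation"
    else:
        recommendation = "Ready for AI pilots"
    return total, subtotals, recommendation


answers = {
    "Data Foundation": [True, True, True, True, True, False],
    "Analytics Capability": [True, True, False, True, False],
    "AI Readiness": [True, False, False, False, False],
}
total, subtotals, recommendation = score_checklist(answers)
print(total, recommendation)  # 9 Strengthen your analytics foundation
```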

A Step-by-Step Path to AI Deployment

Step 1: Identify Key Initiatives and KPIs

Before deploying any advanced technology, identify the business processes that would benefit most from improvement. Use techniques like process mapping to find areas of greatest impact.

Supply chain example: A manufacturer identifies the need to reduce inventory carrying costs while maintaining 95% on-time delivery. Baseline KPIs include inventory turns, order lead time, and fill rate.

Sales example: A SaaS company wants to reduce churn from 8% to 5%. Baseline KPIs include customer health score, usage frequency, support ticket volume, and renewal rate.
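Baseline KPIs are only useful if everyone computes them the same way. The functions below use one common definition for each KPI named in the examples, plus an on-time delivery rate for the 95% target. Your organization's definitions may differ, and the figures are hypothetical.

```python
def inventory_turns(cost_of_goods_sold: float, average_inventory_value: float) -> float:
    # How many times the average inventory is sold through in the period.
    return cost_of_goods_sold / average_inventory_value


def fill_rate(units_shipped_from_stock: int, units_ordered: int) -> float:
    # Share of ordered units shipped from available stock.
    return units_shipped_from_stock / units_ordered


def on_time_delivery_rate(orders_on_time: int, orders_delivered: int) -> float:
    # Share of delivered orders that arrived by the promised date.
    return orders_on_time / orders_delivered


def churn_rate(customers_at_start: int, customers_lost: int) -> float:
    # Share of customers at the start of the period who left during it.
    return customers_lost / customers_at_start


# Hypothetical figures for one period.
print(inventory_turns(12_000_000, 2_000_000))  # 6.0
print(fill_rate(9_400, 10_000))                # 0.94
print(on_time_delivery_rate(1_900, 2_000))     # 0.95
print(churn_rate(500, 40))                     # 0.08
```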

Step 2: Build the Data Foundation

Implement data collection, storage, and automated reporting for your baseline KPIs. This is where most organizations should spend the majority of their initial investment.

- Connect data sources into a centralized system

- Automate data pipelines to eliminate manual data handling

- Build dashboards that stakeholders trust and actually use

- Run data quality audits monthly until quality is consistently high

Step 3: Diagnose Before You Predict

Once you have reliable baseline reporting, use diagnostic analysis to identify the biggest obstacles to reaching your targets.

Supply chain example: Diagnostic reports reveal that 3 out of 40 product categories account for 60% of excess inventory, driven by inaccurate demand forecasts during seasonal peaks.
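The diagnostic in the supply chain example is essentially a Pareto analysis: rank categories by their contribution and find the few that account for most of the problem. The sketch assumes excess inventory has already been valued per category. The category names and amounts are invented, and the example uses 7 categories rather than 40 to keep it short.

```python
def top_contributors(excess_by_category: dict, target_share: float = 0.60) -> tuple:
    """Return the smallest set of largest categories whose combined excess reaches target_share of the total."""
    total = sum(excess_by_category.values())
    ranked = sorted(excess_by_category.items(), key=lambda item: item[1], reverse=True)
    selected, running = [], 0
    for category, excess in ranked:
        if running >= target_share * total:
            break
        selected.append(category)
        running += excess
    return selected, running / total


# Hypothetical excess inventory value ($) by product category.
excess = {
    "Outdoor": 300_000, "Kitchen": 170_000, "Toys": 140_000, "Garden": 100_000,
    "Office": 100_000, "Bath": 100_000, "Pet": 90_000,
}
categories, share = top_contributors(excess)
print(categories, f"{share:.0%}")  # ['Outdoor', 'Kitchen', 'Toys'] 61%
```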

Sales example: Customer behavior analysis shows that customers who do not engage with onboarding resources within the first 14 days churn at 4x the rate of those who do.
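The onboarding finding comes from comparing churn rates between two cohorts. This sketch assumes each customer record carries the number of days until the customer's first onboarding interaction (or `None` if it never happened) and a churned flag. Both fields and the sample data are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Customer:
    first_onboarding_day: Optional[int]  # days after signup; None if never engaged
    churned: bool


def group_churn_rate(group: list) -> float:
    return sum(c.churned for c in group) / len(group)


def onboarding_churn_comparison(customers: list, window_days: int = 14) -> tuple:
    """Return (churn rate if engaged within the window, churn rate otherwise, ratio of the two)."""
    engaged, not_engaged = [], []
    for c in customers:
        in_window = c.first_onboarding_day is not None and c.first_onboarding_day <= window_days
        (engaged if in_window else not_engaged).append(c)
    # Assumes both cohorts are non-empty.
    rate_engaged = group_churn_rate(engaged)
    rate_not_engaged = group_churn_rate(not_engaged)
    ratio = rate_not_engaged / rate_engaged if rate_engaged else float("inf")
    return rate_engaged, rate_not_engaged, ratio


# Hypothetical cohort of 20 customers.
customers = (
    [Customer(3, False)] * 9 + [Customer(5, True)]            # engaged within 14 days
    + [Customer(None, False)] * 6 + [Customer(30, True)] * 4  # engaged late or never
)
print(onboarding_churn_comparison(customers))  # (0.1, 0.4, 4.0)
```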

Step 4: Deploy AI on Targeted Problems

With diagnosed root causes, you can now deploy AI where it will have the greatest measurable impact - not everywhere at once, but on specific, high-value problems.

Supply chain example: A demand forecasting model trained on historical sales, seasonality, and promotional data reduces forecast error for those 3 problematic categories by 35%.
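A claim like "reduces forecast error by 35%" needs a defined error metric, and the example does not name one. The sketch below assumes mean absolute percentage error (MAPE) and expresses the improvement as a relative reduction. The demand and forecast figures are invented and are not meant to reproduce the 35% result.

```python
def mape(actuals: list, forecasts: list) -> float:
    """Mean absolute percentage error, in percent; skips periods with zero actual demand."""
    if len(actuals) != len(forecasts):
        raise ValueError("actuals and forecasts must have the same length")
    errors = [abs(a - f) / abs(a) for a, f in zip(actuals, forecasts) if a != 0]
    return 100 * sum(errors) / len(errors)


def relative_reduction(baseline_error: float, new_error: float) -> float:
    # For example, 0.35 means the new error is 35% lower than the baseline error.
    return (baseline_error - new_error) / baseline_error


# Hypothetical weekly demand for one category, with two sets of forecasts.
actuals = [100, 120, 80, 200]
baseline = [80, 150, 100, 160]
model = [90, 130, 90, 180]

before, after = mape(actuals, baseline), mape(actuals, model)
print(f"MAPE {before:.1f}% -> {after:.1f}% ({relative_reduction(before, after):.0%} lower)")
```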

Sales example: A churn prediction model identifies at-risk accounts 30 days before renewal, triggering automated and personalized re-engagement workflows.
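Once a churn model produces a risk score per account, choosing whom to contact is a simple filtering step. The sketch assumes a hypothetical `churn_probability` field produced by some separately trained model, a 30-day horizon matching the example, and an arbitrary 0.5 cut-off. The actual workflow trigger is left as a comment.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Account:
    account_id: str
    renewal_date: date
    churn_probability: float  # assumed to come from a separately trained churn model


def accounts_to_engage(accounts: list, today: date, horizon_days: int = 30, threshold: float = 0.5) -> list:
    """Accounts renewing within `horizon_days` whose churn probability meets `threshold`, riskiest first."""
    cutoff = today + timedelta(days=horizon_days)
    at_risk = [
        a for a in accounts
        if today <= a.renewal_date <= cutoff and a.churn_probability >= threshold
    ]
    return sorted(at_risk, key=lambda a: a.churn_probability, reverse=True)


accounts = [
    Account("A-101", date(2024, 6, 20), 0.72),
    Account("A-102", date(2024, 6, 15), 0.20),  # renewing soon, but low risk
    Account("A-103", date(2024, 8, 1), 0.90),   # high risk, but renewal is outside the window
    Account("A-104", date(2024, 6, 28), 0.55),
]
for account in accounts_to_engage(accounts, today=date(2024, 6, 1)):
    # A real system would hand each account to a re-engagement workflow here.
    print(account.account_id, account.churn_probability)
```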

Red Flags That You Are Not Ready

If any of the following are true, invest in your data foundation before pursuing AI:

- "We need AI to figure out what is going on." AI does not replace understanding. If you cannot explain your current business performance with simple reports, a machine learning model will not help.

- "Our data is a mess, but AI can clean it." AI trained on bad data produces bad predictions. Garbage in, garbage out still applies.

- "Our competitor is using AI, so we need it too." Competitive pressure is not a strategy. Define the specific business outcome you want to improve first.

- "We just need to hire a data scientist." One person cannot build the data infrastructure, create the reporting layer, diagnose business problems, and deploy production ML models. You need the foundation first.

The Bottom Line

AI and machine learning are not magic. They are tools that amplify the quality of your data infrastructure and the clarity of your business questions. Organizations that build a strong data foundation - proper collection, reliable storage, trusted reporting, and diagnostic analysis - position themselves to extract real value from AI. Those that skip these steps end up with expensive experiments that never reach production.

If your checklist score tells you that you are not ready, that is not a failure. It is a starting point. The path from ad-hoc data to AI-driven decisions is well-understood, and every step along the way delivers standalone value to your business.

Need help assessing your data maturity or building the foundation for AI? Our analytics strategic consulting team helps organizations at every stage of this journey.