Python Data Engineering & Analytics

The engine behind every automated dashboard

We build Python-based data pipelines that connect your APIs, databases, and SaaS platforms into automated data flows. Your BI tools get clean, fresh data without anyone downloading a CSV.

Book a Free Consultation

Python Services We Deliver

ETL Pipeline Development

We build Python-based ETL/ELT pipelines that extract data from APIs, databases, files, and SaaS platforms, transform it, and load it into your data warehouse on automated schedules.
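
The sketch below shows the extract, transform, load split in plain Python. It is a minimal illustration with several assumptions. `extract()` is a hypothetical stand-in for an API call or file read. An in-memory SQLite database takes the place of the warehouse. The table and field names are made up for the example.

```python
import sqlite3


def extract() -> list[dict]:
    """Hypothetical extract step: stands in for an API call or SaaS export."""
    return [
        {"order_id": "A-1", "amount": "19.90", "country": " us "},
        {"order_id": "A-2", "amount": "5.00", "country": "DE"},
    ]


def transform(rows: list[dict]) -> list[tuple[str, float, str]]:
    """Cast amounts to numbers and normalize country codes."""
    return [
        (r["order_id"], float(r["amount"]), r["country"].strip().upper())
        for r in rows
    ]


def load(conn: sqlite3.Connection, rows: list[tuple[str, float, str]]) -> int:
    """Write transformed rows to the target table inside one transaction."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id TEXT PRIMARY KEY, amount REAL, country TEXT)"
    )
    with conn:  # commits on success, rolls back if any insert fails
        conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    return len(rows)


def run_pipeline(conn: sqlite3.Connection) -> int:
    return load(conn, transform(extract()))


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    print(run_pipeline(conn), "rows loaded")
```

Each stage is a separate function, so transformations can be tested without touching the source API or the warehouse.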

API Integration & Automation

Custom Python scripts that connect your CRM, ERP, marketing platforms, affiliate networks, and third-party APIs into a unified data flow. We handle REST, GraphQL, and webhooks.
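
Of the three integration styles, webhooks are the one where the platform calls you. The receiver therefore usually needs to confirm that each request is genuine. Many platforms sign webhook payloads for this purpose. The sketch below assumes a hex-encoded HMAC-SHA256 signature over the raw request body, computed with a shared secret. The header name, encoding, and hash algorithm vary by provider, so treat these details as assumptions to check against each platform's documentation.

```python
import hashlib
import hmac


def verify_webhook(payload: bytes, signature_hex: str, secret: bytes) -> bool:
    """Return True if signature_hex is the HMAC-SHA256 of payload under secret.

    Assumes the provider sends a hex digest of the raw body; some platforms
    use base64 or a different hash, so adapt to the provider's docs.
    """
    expected = hmac.new(secret, payload, hashlib.sha256).hexdigest()
    # compare_digest avoids content-based short-circuiting, so response
    # timing does not reveal how much of the signature matched.
    return hmac.compare_digest(expected, signature_hex)


if __name__ == "__main__":
    secret = b"shared-secret"  # hypothetical; load from config in practice
    body = b'{"event": "conversion", "amount": 12.5}'
    good = hmac.new(secret, body, hashlib.sha256).hexdigest()
    print(verify_webhook(body, good, secret))         # True
    print(verify_webhook(body + b" ", good, secret))  # False
```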

Data Transformation & Cleaning

We write Python transformations that flatten nested data, clean inconsistent records, deduplicate, validate, and standardize your data before it reaches your BI tools.
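
Here is a standard-library sketch of the flatten, standardize, and deduplicate steps. The field names (`id`, `customer.email`) are hypothetical. On larger batches, `pandas.json_normalize` and `DataFrame.drop_duplicates` would often do the same work.

```python
from typing import Any


def flatten(record: dict[str, Any], parent: str = "", sep: str = ".") -> dict[str, Any]:
    """Flatten nested dicts into dotted column names: {"a": {"b": 1}} -> {"a.b": 1}."""
    flat: dict[str, Any] = {}
    for key, value in record.items():
        name = f"{parent}{sep}{key}" if parent else key
        if isinstance(value, dict):
            flat.update(flatten(value, name, sep))
        else:
            flat[name] = value  # lists and scalars are kept as-is
    return flat


def clean(records: list[dict[str, Any]], key: str = "id") -> list[dict[str, Any]]:
    """Flatten each record, normalize e-mail addresses, and keep the first row per key."""
    seen: set[Any] = set()
    out: list[dict[str, Any]] = []
    for record in records:
        row = flatten(record)
        email = row.get("customer.email")
        if isinstance(email, str):
            row["customer.email"] = email.strip().lower()
        if row.get(key) is None or row[key] in seen:
            continue  # skip rows without a key, and duplicates of earlier rows
        seen.add(row[key])
        out.append(row)
    return out
```

Keeping the first occurrence assumes the source returns records in a meaningful order. When it does not, sorting by an update timestamp before deduplicating is the safer choice.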

Database & Warehouse Loading

Automated data loading into BigQuery, Snowflake, PostgreSQL, MySQL, or MongoDB. We handle upserts, incremental loads, schema evolution, and data validation.
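
Upserts and incremental loads usually work together. Each run loads only the records that changed since the last successful run (the watermark). The upsert then updates rows that already exist instead of duplicating them. The sketch below uses SQLite's `INSERT ... ON CONFLICT DO UPDATE` (available since SQLite 3.24) as a stand-in. PostgreSQL accepts the same `ON CONFLICT` syntax, while BigQuery and Snowflake typically express the same idea with `MERGE`. The table layout and the ISO-8601 `updated_at` column are assumptions.

```python
import sqlite3

UPSERT_SQL = """
INSERT INTO orders (id, status, updated_at) VALUES (?, ?, ?)
ON CONFLICT(id) DO UPDATE SET
    status = excluded.status,
    updated_at = excluded.updated_at
"""


def create_table(conn: sqlite3.Connection) -> None:
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(id TEXT PRIMARY KEY, status TEXT, updated_at TEXT)"
    )


def upsert_incremental(
    conn: sqlite3.Connection,
    rows: list[tuple[str, str, str]],
    watermark: str,
) -> str:
    """Upsert rows whose updated_at is later than watermark; return the new watermark.

    Timestamps are ISO-8601 strings in one consistent format, so string
    comparison matches chronological order.
    """
    fresh = [row for row in rows if row[2] > watermark]
    with conn:  # one transaction per batch
        conn.executemany(UPSERT_SQL, fresh)
    return max((row[2] for row in fresh), default=watermark)
```

A strict `>` comparison can skip late-arriving records that share the watermark's exact timestamp. Because the upsert is idempotent, a common and safer variant is to use `>=` and reprocess the boundary.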

Machine Learning & Data Science

Predictive models, segmentation, recommendation engines, and demand forecasting using scikit-learn, XGBoost, and TensorFlow. We build models that integrate directly into your business workflows.

Scripting & Task Automation

Automate repetitive data tasks: scheduled reports, file processing, data validation checks, alert systems, and cross-system synchronization. Eliminate manual work your team does every week.
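
A data freshness check is one of the simplest scheduled validations. The sketch below assumes the pipeline records a timezone-aware `last_loaded_at` timestamp for each table. `logging.error` stands in for whatever alert channel (e-mail, Slack, a monitoring tool) is actually wired up.

```python
import logging
from datetime import datetime, timedelta, timezone
from typing import Optional

log = logging.getLogger("pipeline.freshness")


def check_freshness(
    table: str,
    last_loaded_at: datetime,
    max_age: timedelta,
    now: Optional[datetime] = None,
) -> bool:
    """Return True if the table was loaded within max_age; otherwise log an alert."""
    now = now or datetime.now(timezone.utc)
    age = now - last_loaded_at
    if age > max_age:
        # Replace with an e-mail, Slack, or paging call in a real deployment.
        log.error("%s is stale: last load %s ago (limit %s)", table, age, max_age)
        return False
    return True
```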

Cloud Function Deployment

We deploy Python scripts as serverless functions on GCP Cloud Functions, AWS Lambda, or Azure Functions. Pay only when your code runs, with automatic scaling.
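
On AWS Lambda, the service invokes a Python function that takes `event` and `context` arguments. The function is configured as `module.function` in the function settings. The sketch below assumes a hypothetical event carrying a `records` list; a scheduled job would typically fetch its own data instead. The `statusCode`/`body` return shape is what API Gateway proxy integrations expect. Other triggers accept any JSON-serializable return value.

```python
import json
import logging

logger = logging.getLogger()
logger.setLevel(logging.INFO)


def lambda_handler(event, context):
    """Entry point Lambda calls; configure the handler as <module>.lambda_handler.

    The event shape ({"records": [...]}) is an assumption for this sketch.
    """
    records = event.get("records", [])
    valid = [r for r in records if r.get("amount") is not None]
    logger.info("Kept %d of %d records", len(valid), len(records))
    # A real function would load `valid` into the warehouse at this point.
    return {"statusCode": 200, "body": json.dumps({"processed": len(valid)})}
```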

Code Review & Optimization

Audit your existing Python data code for performance, reliability, and maintainability. We refactor slow scripts, add error handling, logging, and monitoring.

Python in Action: Case Studies

Real projects where Python data engineering delivered measurable outcomes.


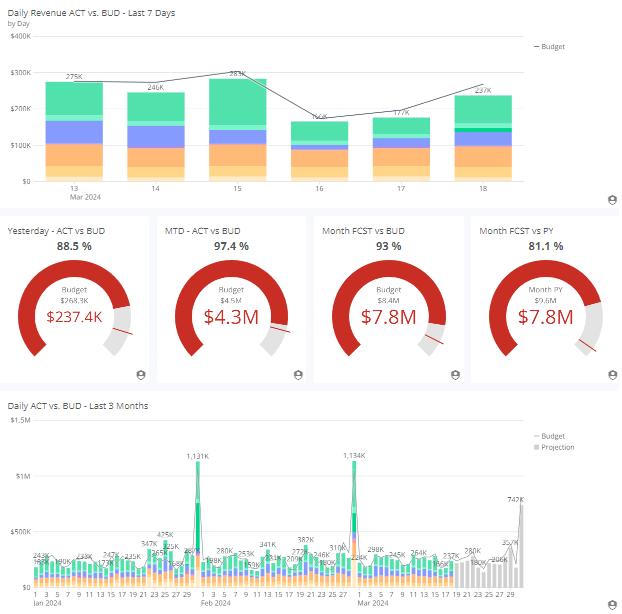

3PL Digital Transformation: Data Analytics & Automated Invoicing

Discover how a U.S. 3PL company eliminated revenue leakage, automated invoicing, and gained real-time margin visibility.

Read case study →

Affiliate Marketing Dashboards: Unified Performance Tracking

Learn how affiliate teams replaced spreadsheets with unified dashboards to track performance across networks, identify profit leaks, and optimize ROAS.

Read case study →

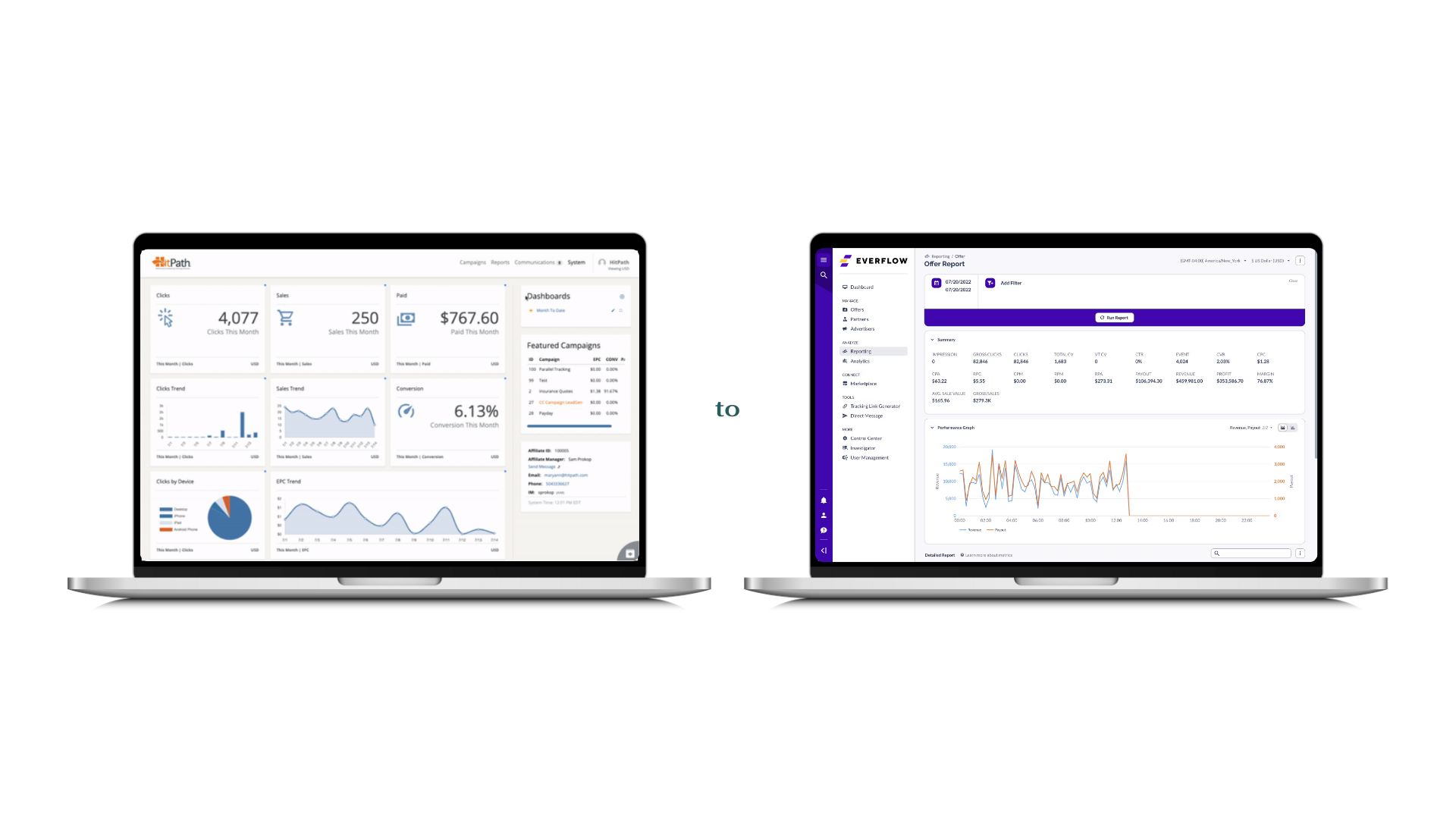

HitPath to Everflow BI Migration for Affiliate Tracking

Unified HitPath and Everflow data into one BI system with migration monitoring dashboards, ensuring reporting continuity throughout the platform transition.

Read case study →

Email Marketing Data Management for Affiliate Publishers

How we helped email marketing publishers manage high-volume campaign data with scalable pipelines, centralized storage, and automated BI reporting.

Read case study →

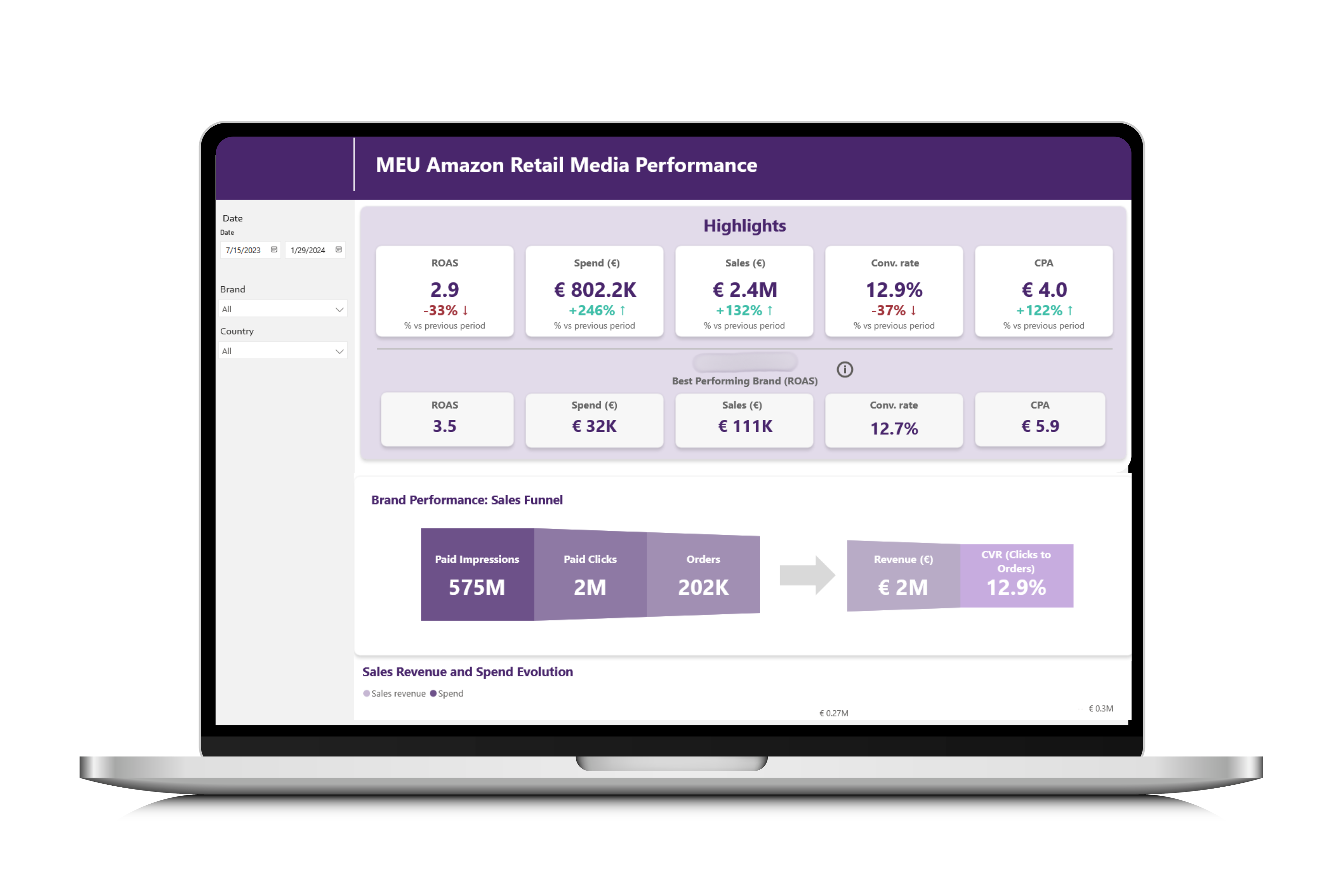

Amazon Ads Reporting with Power BI for Food & Beverage

How a snack manufacturer replaced manual Amazon Ads tracking with automated Power BI dashboards to optimize spend, measure ROAS, and guide marketing decisions.

Read case study →

Car Rental Marketplace BI: Startup Analytics with Power BI

We built the analytics stack for a car rental marketplace using MongoDB, Python, MariaDB, and Power BI to centralize operations and enable data-driven growth.

Read case study →

BI Finance Reporting for a Multinational Affiliate Network

Automated multinational financial consolidation for a US affiliate network. Replaced manual spreadsheets with dynamic BI dashboards for revenue and margins.

Read case study →

Marketing Automation with RFM Segmentation for a Coffee Chain

How we helped a coffee shop chain connect POS data, build RFM segmentation, and automate SMS campaigns that reduced churn by 10% and grew revenue by 12%.

Read case study →

Struggling with Data Pipelines?

- Your team manually downloads CSV exports from SaaS platforms and uploads them to your database every day.

- API data from your CRM, affiliate platform, or marketing tools never makes it into your dashboards.

- You have data in MongoDB or other NoSQL databases that your BI tool cannot connect to directly.

- Data transformations are done in Excel because nobody on the team writes code.

- Your existing Python scripts are fragile, undocumented, and break without warning.

- You need a machine learning model but do not have data scientists on staff.

Why Choose Witanalytica for Python?

Production-Grade Python

We write Python that runs in production, not notebooks. Proper error handling, logging, retry logic, configuration management, and deployment pipelines.
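
Here is a small example of the configuration-management part. The sketch reads settings from environment variables into a frozen dataclass and fails fast when a required value is missing. A misconfigured deployment therefore stops at startup instead of midway through a load. The environment variable names are hypothetical.

```python
import logging
import os
from dataclasses import dataclass
from typing import Mapping

REQUIRED = ("PIPELINE_API_URL", "PIPELINE_API_TOKEN")  # hypothetical names


@dataclass(frozen=True)
class PipelineConfig:
    api_url: str
    api_token: str
    batch_size: int = 500


def load_config(env: Mapping[str, str] = os.environ) -> PipelineConfig:
    """Build the config from environment variables, failing fast on missing values."""
    missing = [name for name in REQUIRED if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing required settings: {', '.join(missing)}")
    return PipelineConfig(
        api_url=env["PIPELINE_API_URL"],
        api_token=env["PIPELINE_API_TOKEN"],
        batch_size=int(env.get("PIPELINE_BATCH_SIZE", "500")),
    )


def configure_logging() -> None:
    # Timestamps and levels make log lines easier to filter when troubleshooting.
    logging.basicConfig(
        level=logging.INFO,
        format="%(asctime)s %(levelname)s %(name)s: %(message)s",
    )
```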

Built for Small IT Teams

We design pipelines your sysadmin or IT director can monitor and maintain. Clear documentation, simple architecture, and alerting that works without a dedicated DevOps team.

Full-Stack Data Context

We do not just write scripts. We understand data warehouses, BI tools, and business metrics. Our Python code is designed to feed the dashboards your team actually uses.

Cost-Efficient Architecture

Serverless functions, scheduled jobs, and pay-per-use cloud services. We avoid over-engineering and select the simplest architecture that handles your data volumes reliably.

Our Python Pipeline Delivery Process

We inventory your data sources, document the transformations needed, define output schemas, and map the end-to-end data flow from source to dashboard.

We decide the pipeline architecture: orchestration tool, scheduling strategy, cloud vs. on-prem, and error handling patterns. We design for your team's ability to maintain it.

We build the extraction, transformation, and loading scripts in Python, with proper logging, retry logic, and data validation at each stage.

We validate output data against source systems, test edge cases, verify incremental loads, and ensure data quality checks catch issues before they reach your dashboards.
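
One simple way to validate against a source system is a reconciliation check run after each load. The sketch below compares row counts, missing keys, and a summed amount column. The field names and the 0.01 tolerance are illustrative assumptions. In practice, both sides would come from queries against the source system and the warehouse.

```python
from typing import Any


def reconcile(
    source_rows: list[dict[str, Any]],
    target_rows: list[dict[str, Any]],
    key: str = "id",
    amount_field: str = "revenue",
    tolerance: float = 0.01,
) -> list[str]:
    """Return a list of discrepancies; an empty list means the check passed."""
    issues: list[str] = []
    if len(source_rows) != len(target_rows):
        issues.append(
            f"row count mismatch: source={len(source_rows)} target={len(target_rows)}"
        )
    missing = {r[key] for r in source_rows} - {r[key] for r in target_rows}
    if missing:
        issues.append(f"{len(missing)} keys missing in target, e.g. {sorted(missing)[:5]}")
    source_total = sum(r[amount_field] for r in source_rows)
    target_total = sum(r[amount_field] for r in target_rows)
    if abs(source_total - target_total) > tolerance:
        issues.append(
            f"{amount_field} total differs: source={source_total:.2f} target={target_total:.2f}"
        )
    return issues
```

A non-empty result can fail the pipeline run or trigger the same alerting used for failures. Bad data is then stopped before it reaches a dashboard.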

We deploy pipelines to your cloud environment, configure scheduling (Cloud Scheduler, cron, Airflow), and set up alerts for failures.

We document the pipeline architecture, data flow, configuration, and troubleshooting steps. Your team or sysadmin can maintain it without needing a data engineer on staff.


TESTIMONIALS

Witanalytica has been an excellent partner in managing and optimizing our Tableau environment. Their team’s technical expertise and proactive support have streamlined our reporting processes, improved dashboard performance, and provided valuable insights to our business. Their responsiveness and deep understanding of data analytics make them a trusted extension of our own team.

Mark Lack

Director of Data Analytics and AI, The Ubique Group

Witanalytica helped us transition from Excel to a dynamic dashboard, allowing us to view all the relevant data and the KPIs that we track as a business. Instead of having our developers code an interface for weeks, we can now instantly accomplish this process through an interface, eliminating the need for manual coding.

Radu Albastroiu

Startup Founder, masinilacheie.ro

Witanalytica’s expertise in big data engineering and visualization complements our digital media audit and customer analytics services. Collaborating with them allows us to deliver end-to-end analytics solutions and services, without the risks and investments associated with building these capabilities in-house.

Silviu Toma

Senior Partner, Microanalytics

Working with Witanalytica has been a consistently positive experience. They are responsive, professional, and approach every revision with patience and precision. What sets them apart is a strong understanding of supply chain management, inventory planning, and sales operations, which makes collaboration efficient and ensures deliverables align with real business needs. They have also worked effectively across multiple departments in our organization and manage a 6-7 hour time zone difference seamlessly. I would confidently recommend them to any organization seeking a skilled and dependable analytics partner.

Rubin Chen

Supply Chain VP, The Ubique Group

Our Python Data Engineering Pricing Models

Transparent pricing built for long-term partnerships, not one-off transactions.

On-Demand Expertise

All tasks are tracked, and delivered services are invoiced monthly.

| Activity | Hourly Rate |
|---|---|
| Data Engineering & Database Administration | $110 |
| Business Intelligence Reporting | $90 |
| Data Science | $120 |

Reserved Capacity Agreement

- Pre-purchase a package of monthly working hours that guarantees reserved capacity and priority availability, regardless of our workload.

- Because this capacity is exclusively allocated to you, unused hours do not carry over to the following month.

| Hours Package | Price |
|---|---|
| Every 50 hours | $4,500 (10% savings) |

Alternatively, we offer project-based pricing

For well-defined engagements, we scope the full project upfront and agree on a fixed fee, so you know exactly what to expect.

Your Goals, Our Expertise

We start from your strategic objectives and work our way back to the right mix of solutions and technologies, not the other way round.

Book a Consulting Call

Data Engineering Resources & Insights

Articles and guides on data pipelines, automation, and analytics engineering.


How to Deploy Data Analytics in Manufacturing: From Shop Floor to Boardroom

A practical guide to deploying data analytics in manufacturing logistics and supply chain, built around the five-tier meeting structure that actually runs modern factories. From shift-level dashboards to multi-plant regional operations, powered by a single source of truth.

AI Readiness Checklist: Assess Your Data Maturity First

Most AI projects fail due to poor data foundations. Use this data maturity checklist to assess whether your organization is truly ready to deploy AI.

Self-Service Analytics vs. Professional Analytics: How to Choose the Right Approach

Not every analytics problem needs a data team, and not every problem can be solved with a spreadsheet. This decision framework helps you determine when self-service tools are enough and when you need professional analytics support.

Identity Resolution: Unifying Customer Data for Marketing

Customer data scattered across CRM, email, ads, and web analytics? Identity resolution unifies fragmented profiles for precise targeting and attribution.

Inventory Optimization: MOQ, Safety Stock, and Consignment Analytics

How to use data analytics to calculate optimal order quantities, set safety stock levels, manage consignment inventory, and avoid the twin traps of overstocking and stockouts.

LLMs for Business Leaders: Applications Across Departments

LLMs go beyond chatbots. Learn how business leaders apply them to customer service, marketing automation, HR workflows, and internal knowledge management.

Why Digital Marketing Agencies Need Data Analytics Partners

Marketing agencies excel at campaigns but often lack data engineering depth. Partnering with analytics specialists fills the gap and improves client outcomes.

BI Tools for Financial Reporting: Automate and Consolidate

Spreadsheet-based financial reporting is slow and error-prone. See how Power BI and Domo automate consolidation, enable drill-downs, and speed up close cycles.

Data Analytics Adoption: A 5-Stage Enterprise Framework

Adopting analytics requires more than tools. This framework covers stakeholder alignment, maturity assessment, roadmap planning, and phased implementation.

Structuring a Data Science Department: 3 Org Models Compared

Building a data science team? Compare embedded, centralized, and hybrid structures to find which model fits your company size, culture, and analytics maturity.

Frequently Asked Questions

Python is free, flexible, and runs on every major platform. Commercial ETL tools like Alteryx or Informatica typically cost $5,000-$50,000+ per year. For mid-sized companies, Python-based pipelines can deliver comparable capabilities at a fraction of the cost, with more flexibility for custom integrations.

Yes. We design pipelines with monitoring and alerting so your IT team knows when something fails. For ongoing changes, we offer support retainers. Many clients have their sysadmin manage the schedules while we handle code changes as needed.

For ETL: pandas, requests, SQLAlchemy, google-cloud-bigquery, snowflake-connector-python. For ML: scikit-learn, XGBoost, LightGBM, TensorFlow. For deployment and scheduling: Cloud Functions, AWS Lambda, or lightweight cron-based scheduling. We pick the simplest tools that get the job done.

Yes. We have built integrations with Everflow, Affise, HitPath, Shopify, Salesforce, HubSpot, Amazon Ads, Square POS, Samsara, and dozens of other platforms. If the platform has an API, we can extract data from it.

We implement retry logic with exponential backoff and handle pagination. For high-volume APIs, we use incremental extraction so each run pulls only new or changed records, reducing API calls and processing time.
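
Retries with exponential backoff, cursor pagination, and incremental extraction can be sketched with the standard library. `fetch_page(cursor, since)` is a hypothetical wrapper around one platform's API. It returns `(records, next_cursor)` and raises `TransientError` on retryable failures such as rate limiting (HTTP 429) or server errors. Real APIs differ in how they expose cursors and incremental filters. Delays grow as `base * 2**attempt` up to a cap, with random jitter so parallel jobs do not retry in lockstep.

```python
import random
import time
from functools import partial
from typing import Callable, Iterator, Optional, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Raised by fetch_page for retryable failures (e.g. HTTP 429 or 5xx)."""


def with_backoff(
    fn: Callable[[], T],
    retries: int = 5,
    base: float = 1.0,
    cap: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn(), retrying TransientError with capped exponential backoff and jitter."""
    for attempt in range(retries):
        try:
            return fn()
        except TransientError:
            delay = min(cap, base * 2 ** attempt)
            sleep(random.uniform(0, delay))  # "full jitter"
    return fn()  # final attempt; any error now propagates to the caller


def extract_since(
    fetch_page: Callable[[Optional[str], str], tuple[list[dict], Optional[str]]],
    since: str,
    **retry_kwargs,
) -> Iterator[dict]:
    """Yield records changed after `since`, following cursors until there are none."""
    cursor: Optional[str] = None
    while True:
        records, cursor = with_backoff(partial(fetch_page, cursor, since), **retry_kwargs)
        yield from records
        if cursor is None:
            break
```

Passing `sleep` as a parameter keeps the functions testable without real waiting. Persisting the `since` value after each successful run is what makes the next extraction incremental.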

Yes. We build ML models for customer segmentation (RFM), demand forecasting, churn prediction, recommendation engines, lead scoring, and anomaly detection. Models are deployed as scheduled jobs or API endpoints that integrate with your existing systems.
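
RFM segmentation is also the basis of the coffee-chain case study above. Here is a minimal standard-library sketch of the scoring step. It is one common formulation, not the exact model from any client project. Recency is the number of days since the last order, frequency is the order count, and monetary is total spend. Each measure is converted to a score from 1 to `bins` by rank. With pandas, `pandas.qcut` is a typical alternative for the binning.

```python
from collections import defaultdict
from datetime import date
from typing import Iterable


def rfm_scores(
    orders: Iterable[tuple[str, date, float]],
    as_of: date,
    bins: int = 5,
) -> dict[str, tuple[int, int, int]]:
    """Return {customer_id: (R, F, M)} with 1 = weakest and `bins` = strongest.

    Scores are rank-based: ties are broken by sort order, which is a
    simplification of stricter quantile binning.
    """
    last: dict[str, date] = {}
    freq: dict[str, int] = defaultdict(int)
    money: dict[str, float] = defaultdict(float)
    for customer, day, amount in orders:
        last[customer] = max(last.get(customer, day), day)
        freq[customer] += 1
        money[customer] += amount
    recency = {c: (as_of - d).days for c, d in last.items()}

    def score(values: dict, higher_is_better: bool = True) -> dict[str, int]:
        # Sort weakest first, then map rank positions onto 1..bins.
        ranked = sorted(values, key=values.get, reverse=not higher_is_better)
        n = len(ranked)
        return {c: i * bins // n + 1 for i, c in enumerate(ranked)}

    r = score(recency, higher_is_better=False)  # fewer days since last order is better
    f = score(freq)
    m = score(money)
    return {c: (r[c], f[c], m[c]) for c in last}
```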

A simple API-to-warehouse pipeline usually takes 1-2 weeks; a multi-source ETL system with transformations and scheduling, 3-6 weeks. Complex ML projects may take 2-3 months. We scope every project before starting.

We deploy to GCP Cloud Functions, AWS Lambda, or a simple VM with cron scheduling, depending on your existing infrastructure. Serverless options minimize cost because you only pay when data is being processed.

We offer two engagement models with transparent pricing.

On-Demand Expertise

All work is tracked and billed monthly at hourly rates:

- Data Engineering & Database Administration - $110/hr

- Business Intelligence Reporting - $90/hr

- Data Science - $120/hr

Reserved Capacity Agreement

- Pre-purchase a 50-hour monthly package at $4,500 (10% savings)

- Guaranteed priority availability regardless of our workload

We also offer project-based pricing for well-defined engagements.

Contact us to discuss the best fit for your needs.